Chapter 9: Evolutionary Computing

Time flies like an arrow; fruit flies like a banana.

—Unknown

For centuries, pottery created by the Ancestral Puebloans and Mogollon cultures of the southwestern United States and northern Mexico has held great significance in both ceremonial and everyday contexts. Techniques and design elements like those used to create this Chaco Ancestral Pueblo bowl are passed down through generations, with each potter learning, preserving, and subtly adapting these designs. This ongoing process gives rise to a continually evolving tapestry of familial and cultural expression.

Take a moment to think back to a simpler time, when you wrote your first p5.js sketches and life was free and easy. Which fundamental programming concept did you likely use in those first sketches and continue to use over and over again to this day? Variables. Variables allow you to save data and reuse it while a program runs.

Of course, this is nothing new. In this book, you’ve moved far beyond sketches with just one or two simple variables, working up to sketches organized around more complex data structures: variables holding custom objects that include both data and functionality. You’ve used these complex data structures—classes—to build your own little worlds of movers and particles and vehicles and cells and trees. But there’s been a catch: in each and every example in this book, you’ve had to worry about initializing the properties of these objects. Perhaps you made a whole set of particles with random colors and sizes, or a list of vehicles all starting at the same (x, y) position.

What if, instead of acting as an intelligent designer, assigning the properties of the objects through randomness or thoughtful consideration, you could let a process found in nature—evolution—decide the values for you? Can you think of the variables of a JavaScript object as the object’s DNA? Can objects give birth to other objects and pass down their DNA to a new generation? Can a p5.js sketch evolve?

The answer to all these questions is a resounding yes, and getting to that answer is the focus of this chapter. After all, this book would hardly be complete without tackling a simulation of one of the most powerful algorithmic processes found in nature itself, biological evolution. This chapter is dedicated to examining the principles behind evolutionary processes and finding ways to apply those principles in code.

Genetic Algorithms: Inspired by Actual Events

The primary means for developing code systems that evolve are genetic algorithms (GAs for short), which are inspired by the core principles of Darwinian evolutionary theory. In these algorithms, populations of potential solutions to a problem evolve over generations through processes that mimic natural selection in biological evolution. While computer simulations of evolutionary processes date back to the 1950s, much of our contemporary understanding of GAs stems from the work of John Holland, a professor at the University of Michigan whose 1975 book Adaptation in Natural and Artificial Systems (MIT Press) pioneered GA research. Today, GAs are part of a wider field that’s often referred to as evolutionary computing.

To be clear, GAs are only inspired by genetics and evolutionary theory, and aren’t intended to precisely implement the science behind these fields. As I explore GAs in this chapter, I won’t be making Punnett squares (sorry to disappoint), and there will be no discussion of nucleotides, protein synthesis, RNA, or other topics related to the biological processes of evolution. I don’t care so much about creating a scientifically accurate simulation of evolution as it happens in the physical world; rather, I care about methods for applying evolutionary strategies in software.

This isn’t to say that a project with more scientific depth wouldn’t have value. In fact, a whole field of computational biology research does take on the challenge of more accurately simulating biological evolutionary processes! I encourage readers with a particular interest in this topic to explore possibilities for expanding the examples provided with additional evolutionary features. Nevertheless, for the sake of keeping the projects manageable, I’m going to stick to the basics. And as it happens, the basics will be plenty complex and exciting.

I should also note that, strictly speaking, the term genetic algorithm refers to a specific algorithm implemented in a specific way to solve specific sorts of problems, and not all those specifics are important to this book. While the formal GA will serve as the foundation for the examples in this chapter, I won’t make a fuss about implementing the algorithm with perfect accuracy, given that I’m looking for creative applications of evolutionary theory in code. As such, this chapter will be broken into the following three parts:

- Traditional genetic algorithm: I’ll begin with the traditional, textbook GA. This algorithm was developed to solve problems in computer science for which the solution space is so vast that a brute-force algorithm would take too long. Here’s an example: I’m thinking of a number between one and one billion. How long will it take you to guess it? With a brute-force approach, you’d have to check every possible solution. Is it one? Is it two? Is it three? Is it four? . . . Luck plays a factor here (maybe I happened to pick five!), but on average, you would end up spending years counting up from one before hitting the correct answer. However, what if I could tell you whether your answer was good or bad? Warm or cold? Very warm? Hot? Ice frigid? If you could evaluate how close (or fit) your guesses are, you could start picking numbers accordingly and arrive at the answer more quickly. Your answer would evolve.

- Interactive selection: After exploring the traditional computer science version, I’ll examine other applications of GAs in the visual arts. Interactive selection refers to the process of evolving something (often a computer-generated image) through user interaction. Let’s say you walk into a museum gallery and see 10 paintings. With interactive selection, you might pick your favorites and allow an algorithmic process to generate (or evolve) new paintings based on your preferences.

- Ecosystem simulation: The traditional computer science GA and interactive selection technique are what you’ll likely find if you search online or read a textbook about artificial intelligence. But as you’ll soon see, they don’t really simulate the process of evolution as it happens in the physical world. In this chapter, I’ll also explore techniques for simulating evolution in an ecosystem of artificial creatures. How can the objects that move about a canvas meet each other, mate, and pass their genes on to a new generation? This could apply directly to the Ecosystem Project outlined at the end of each chapter. It will also be particularly relevant as I explore neuroevolution in Chapter 11.

Why Use Genetic Algorithms?

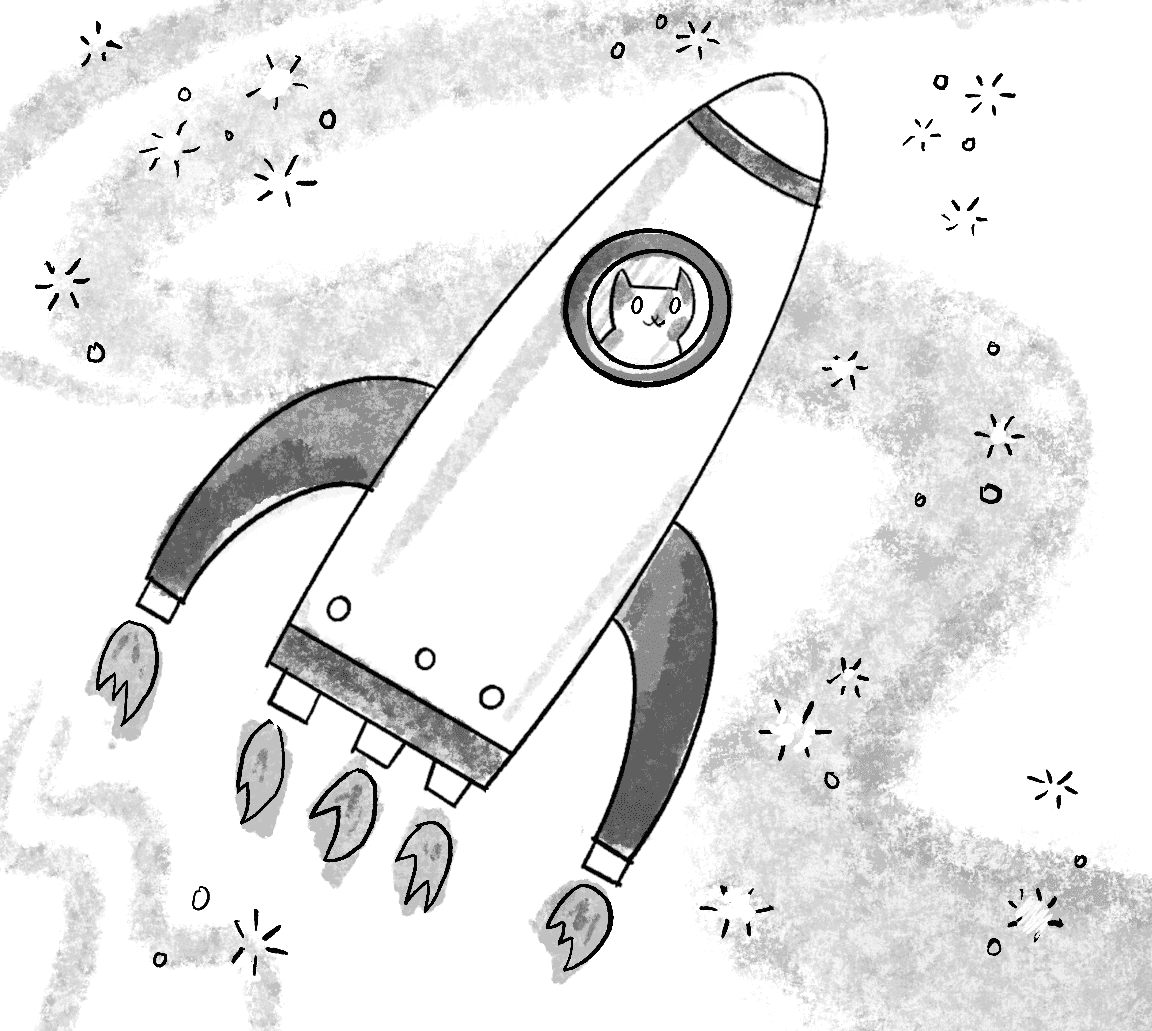

To help illustrate the utility of the traditional GA, I’m going to start with cats. No, not just your everyday feline friends. I’m going to start with some purr-fect cats that paw-sess a talent for typing, with the goal of producing the complete works of Shakespeare (Figure 9.1).

This is my meow-velous twist on the infinite monkey theorem, which is stated as follows: a monkey hitting keys randomly on a typewriter will eventually type the complete works of Shakespeare, given an infinite amount of time. The result holds only in theory, because in practice the number of possible combinations of letters and words makes the likelihood of the monkey actually typing Shakespeare minuscule. To put it in perspective, even if the monkey had started typing at the beginning of the universe, the chance that by now it would have produced just Hamlet, to say nothing of the entire works of Shakespeare, would still be absurdly small.

Consider a cat named Clawdius. Clawdius types on a reduced typewriter containing only 27 characters: the 26 English letters plus the spacebar. The probability of Clawdius hitting any given key is 1 in 27.

Next, consider the phrase “to be or not to be that is the question” (for simplicity, I’m ignoring capitalization and punctuation). The phrase is 39 characters long, including spaces. If Clawdius starts typing, the chance he’ll get the first character right is 1 in 27. Since the probability he’ll get the second character right is also 1 in 27, he has a 1 in 729 ($27 \times 27$) chance of landing the first two characters in correct order. (This follows directly from our discussion of probability in Chapter 0.) Therefore, the probability that Clawdius will type the full phrase is 1 in 27 multiplied by itself 39 times, or $(1/27)^{39}$. That equals a probability of roughly 1 in $6.66 \times 10^{55}$.

Needless to say, even hitting just this one phrase, let alone an entire play, let alone all of Shakespeare’s 38 plays (yes, even The Two Noble Kinsmen) is highly unlikely. Even if Clawdius were a computer simulation and could type a million random phrases per second, for Clawdius to have a 99 percent probability of eventually getting just the one phrase right, he would have to type for 9,719,096,182,010,563,073,125,591,133,903,305,625,605,017 years. (For comparison, the universe is estimated to be a mere 13,750,000,000 years old.)
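The arithmetic behind these numbers can be checked in a few lines of plain JavaScript. This is a minimal sketch rather than part of the chapter's p5.js code: the variable names are my own, and it assumes a 365-day year and a runtime with BigInt support.

```javascript
// Number of distinct 39-character phrases on a 27-key typewriter.
const KEYS = 27n;
const PHRASE_LENGTH = 39n;
const possiblePhrases = KEYS ** PHRASE_LENGTH; // exact, thanks to BigInt

// The chance of success on a single attempt is p = 1 / possiblePhrases.
// For a 99% chance of at least one success in n attempts:
//   1 - (1 - p)^n = 0.99  =>  n ≈ ln(100) / p   (valid because p is tiny)
const attemptsNeeded = Math.log(100) * Number(possiblePhrases);

// Assumptions: one million phrases per second, 365-day years.
const PHRASES_PER_SECOND = 1e6;
const SECONDS_PER_YEAR = 60 * 60 * 24 * 365;
const yearsNeeded = attemptsNeeded / PHRASES_PER_SECOND / SECONDS_PER_YEAR;

console.log(`1 in ${possiblePhrases.toLocaleString("en-US")}`);
console.log(`about ${yearsNeeded.toExponential(3)} years`);
```

The approximation $n \approx \ln(100)/p$ comes from $\ln(1 - p) \approx -p$ for very small $p$, which is certainly the case here.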

The point of all these unfathomably large numbers isn’t to give you a headache, but to demonstrate that a brute-force algorithm (typing every possible random phrase) isn’t a reasonable strategy for arriving randomly at “to be or not to be that is the question.” Enter GAs, which start with random phrases and swiftly find the solution through simulated evolution, leaving plenty of time for Clawdius to savor a cozy catnap.

To be fair, this particular problem (to arrive at the phrase “to be or not to be that is the question”) is a ridiculous one. Since you know the answer already, all you need to do is type it. Here’s a p5.js sketch that solves the problem:

let s = "to be or not to be that is the question";

console.log(s);

Nevertheless, it’s a terrific problem to start with since having a known answer will allow you to easily test the code and evaluate the success of the GA. Once you’ve successfully solved the problem, you can feel more confident in using GAs to do something actually useful: solving problems with unknown answers. This first example serves no real purpose other than to demonstrate how GAs work. If you test the GA results against the known answer and get “to be or not to be,” then you’ve succeeded in writing a GA.

Exercise 9.1

Create a sketch that generates random strings. You’ll need to know how to do this in order to implement the GA example that will shortly follow. How long does it take for p5.js to randomly generate the string cat? How might you adapt this to generate a random design using p5.js’s shape-drawing functions?

How Genetic Algorithms Work

Before I get to any code, I’d like to walk through the steps of the classic GA in a more general way. I’ll illustrate how a population of creatures (a generic term for the elements of a simulation) can evolve over a series of generations. To understand how this works, it’s important to outline three core principles of Darwinian evolution. If natural selection is to occur in code as it does in nature, all three of these elements must be present:

- Heredity: There must be a mechanism that allows parent creatures in one generation to pass their traits down to child creatures in the next generation.

- Variation: There must be a variety of traits present in the population of creatures or a means to introduce variation for evolution to take place. Imagine a population of beetles that were exactly the same: same color, same size, same wingspan, same everything. Without any variety in the population, the children would always be identical to the parents and to each other. New combinations of traits could never occur, and nothing could evolve.

- Selection: There must be a mechanism by which some creatures have the opportunity to be parents and pass on their genetic information, while others don’t. This is commonly referred to as survival of the fittest. Take, for example, a population of gazelles that are chased by lions. The faster gazelles have a better chance of escaping the lions, increasing their chances of living longer, reproducing, and passing on their genetic information to offspring. The term fittest can be misleading, however. It’s often thought to mean biggest, fastest, or strongest, but while it can sometimes encompass physical attributes like size, speed, or strength, it doesn’t have to. The core of natural selection lies in whatever traits best suit an organism’s environment and increase its likelihood of survival and ultimately reproduction. Instead of asserting superiority, fittest can be better understood as “able to reproduce.” Take the Dolania americana (aka the American sand-burrowing mayfly), which is believed to have the shortest life span of any insect. An adult female lives for only five minutes, but as long as it has managed to deposit its egg in the water, it will pass its genetic information to the next generation. For the typing cats, a more fit cat, one that I will assign as more likely to reproduce, is one that has typed more characters present in a given phrase of Shakespeare.

I want to emphasize the context in which I’m applying these Darwinian concepts: a simulated, artificial environment where specific goals can be quantified, all for the sake of creative exploration. Throughout history, the principles of genetics have been used to harm those who have been marginalized and oppressed by dominant societal structures. I believe it is essential to approach projects involving GAs with careful consideration of the language used, and to ensure that the documentation and descriptions of the work are framed inclusively.

With these concepts established, I’ll begin walking through the GA narrative. I’ll do this in the context of typing cats. The algorithm will be divided into several steps that unfold over two parts: a set of conditions for initialization, and the steps that are repeated over and over again until the correct phrase is found.

Step 1: Population Creation

For typing cats, the first step of the GA is to create a population of phrases. I’m using the term phrase rather loosely to mean any string of characters. These phrases are the creatures of this example, though of course they aren’t very creature-like.

In creating the population of phrases, the Darwinian principle of variation applies. Let’s say for the sake of simplicity that I’m trying to evolve the phrase cat and that I have a population of three phrases:

| rid |

| won |

| hug |

Sure, these phrases have variety, but try to mix and match the characters every which way and you’ll never get cat. There isn’t enough variety here to evolve the optimal solution. However, if I had a population of thousands of phrases, all generated randomly, chances are that at least one phrase would have a c as the first character, one would have an a as the second, and one a t as the third. A large population will most likely provide enough variety to generate the desired phrase. (In step 3 of the algorithm, I’ll also demonstrate another mechanism to introduce more variation in case there isn’t enough in the first place.) Step 1 can therefore be described as follows:

Create a population of randomly generated elements.

Element is perhaps a better, more general-purpose term than creature. But what is the element? As you move through the examples in this chapter, you’ll see several scenarios; you might have a population of images or a population of vehicles à la Chapter 5. The part that’s new in this chapter is that each element, each member of the population, has virtual DNA, a set of properties (you could also call them genes) that describe how a given element looks or behaves. For the typing cats, for example, the DNA could be a string of characters. With this in mind, I can be even more specific and describe step 1 of the GA as follows:

Create a population of N elements, each with randomly generated DNA.
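As a rough illustration of step 1 and the variety argument, here is a minimal sketch in plain JavaScript. It assumes the 27-character typewriter alphabet from earlier and uses `Math.random()` in place of p5.js's `random()`; the helper names `randomPhrase()`, `createPopulation()`, and `hasEnoughVariety()` are hypothetical.

```javascript
// Assumption: a 27-character alphabet (a-z plus space).
const ALPHABET = "abcdefghijklmnopqrstuvwxyz ";

// Build one random phrase of the given length.
function randomPhrase(length) {
  let phrase = "";
  for (let i = 0; i < length; i++) {
    phrase += ALPHABET[Math.floor(Math.random() * ALPHABET.length)];
  }
  return phrase;
}

// Step 1: create a population of N elements, each with random DNA.
function createPopulation(size, phraseLength) {
  return Array.from({ length: size }, () => randomPhrase(phraseLength));
}

// Does at least one member carry the target's character at every position?
function hasEnoughVariety(population, target) {
  return [...target].every((ch, i) => population.some((p) => p[i] === ch));
}

console.log(hasEnoughVariety(["rid", "won", "hug"], "cat")); // false
const population = createPopulation(1000, 3);
console.log(hasEnoughVariety(population, "cat")); // very likely true
```

The check only confirms that the raw material for cat exists somewhere in the population. Assembling it is the job of selection and reproduction.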

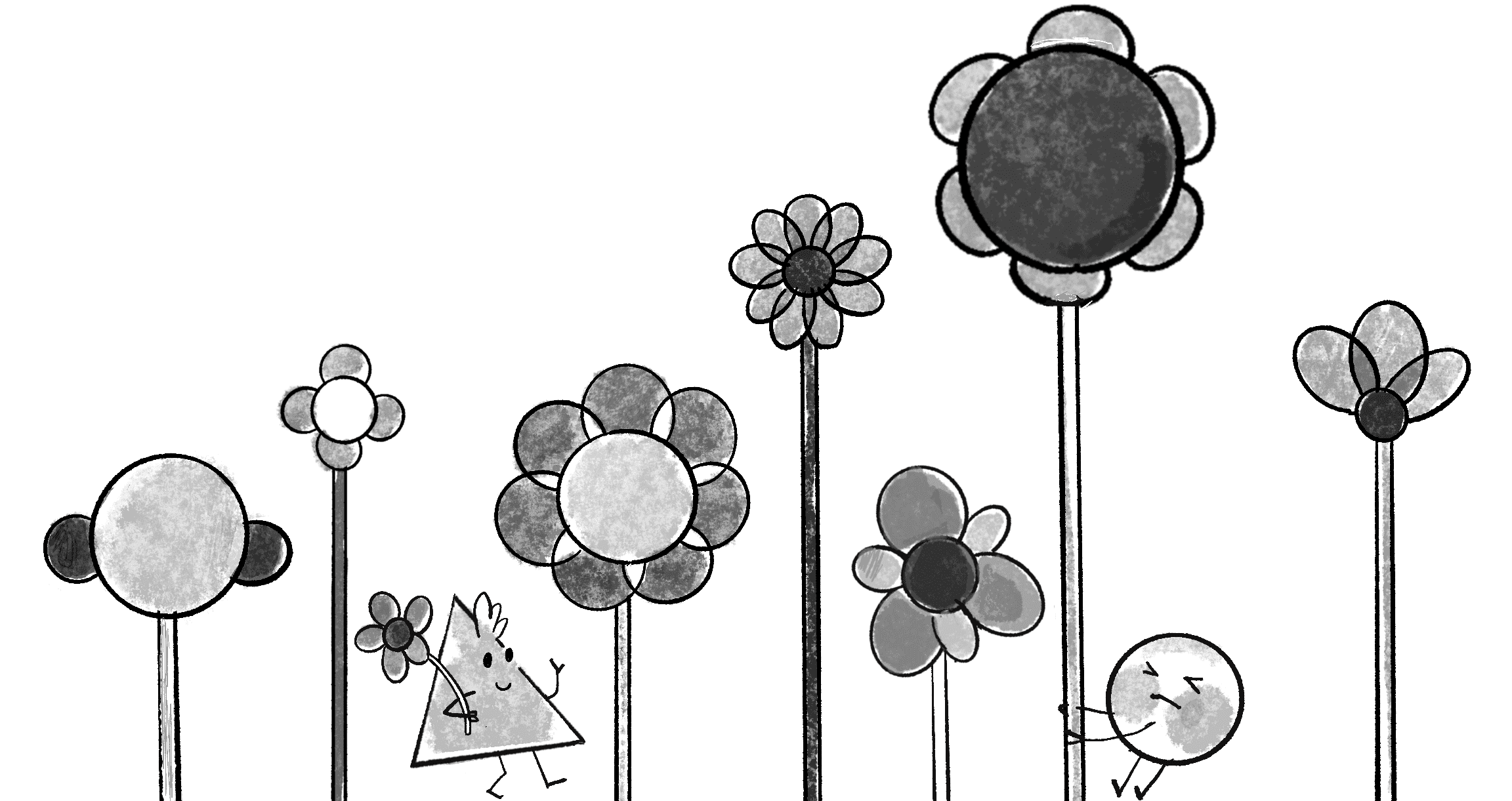

The field of genetics makes an important distinction between the concepts of genotype and phenotype. The actual genetic code—the particular sequence of molecules in the DNA—is an organism’s genotype. This is what gets passed down from generation to generation. The phenotype, by contrast, is the expression of that data—this cat will be big, that cat will be small, that other cat will be a particularly fast and effective typist.

The genotype/phenotype distinction is key to creatively using GAs. What are the objects in your world? How will you design the genotype for those objects—the data structure to store each object’s properties, and the values those properties take on? And how will you use that information to design the phenotype? That is, what do you want these variables to actually express?

We do this all the time in graphics programming, taking values (the genotype) and interpreting them in a visual way (the phenotype). The simplest example is probably color:

| Genotype | Phenotype |
|---|---|
| 0 | Black |
| 127 | Gray |
| 255 | White |

Think of the genotype as the digital information, the data that represents color—in the case of grayscale values, an integer from 0 to 255. The way you choose to express the data is arbitrary: a red value, a green value, and a blue value. It doesn’t even need to be color at all—in a different approach, you could use the same values to describe the length of a line, the weight of a force, and so on:

| Same Genotype | Different Phenotype (Line Length) |
|---|---|
| 0 | No line (length 0) |
| 127 | Medium line (length 127) |
| 255 | Long line (length 255) |
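To make the distinction concrete, here is a small sketch in plain JavaScript that expresses one genotype as two different phenotypes. The function names and the 100-pixel maximum length are assumptions for illustration only. In a p5.js sketch, the same values would more likely be passed to `fill()` or `line()`.

```javascript
// One genotype: three plain numbers in the range 0-255.
const genotype = [0, 127, 255];

// Phenotype 1: read each gene as a grayscale color (a CSS rgb string).
function asGrayscale(gene) {
  return `rgb(${gene}, ${gene}, ${gene})`;
}

// Phenotype 2: read the same gene as a line length, scaled to a
// hypothetical maximum length in pixels.
function asLineLength(gene, maxLength = 100) {
  return (gene / 255) * maxLength;
}

for (const gene of genotype) {
  console.log(gene, asGrayscale(gene), asLineLength(gene).toFixed(1));
}
```

The data never changes. Only the interpretation does.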

A nice aspect of the cat-typing example is that there’s no difference between genotype and phenotype. The DNA data is a string of characters, and the expression of that data is that very string.

Step 2: Selection

The second step of the GA is to apply the Darwinian principle of selection. This involves evaluating the population and determining which members are fit to be selected as parents for the next generation. The process of selection can be divided into two steps:

- Evaluate fitness.

- Create a mating pool.

For the first of these steps, I’ll need to design a fitness function, a function that produces a numeric score to describe the fitness of a given element of the population. This, of course, isn’t how the real world works at all. Creatures aren’t given a score; rather, they simply reproduce or they don’t reproduce. A traditional GA, however, aims to evolve an optimal solution to a problem, so a mechanism to numerically evaluate any given possible solution is required.

Consider the current scenario, the typing cats. Again, for simplicity, I’ll say the target phrase is cat. Assume three members of the population: hut, car, and box. Car is obviously the most fit: it has two correct characters in the correct positions, while hut has only one and box has zero. And there it is, a fitness function:

| DNA | Fitness |
|---|---|
| car | 2 |
| hut | 1 |
| box | 0 |

I’ll eventually want to look at examples with more sophisticated fitness functions, but this is a good place to start.
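The scores in the table can be reproduced with a short function. This is a sketch in plain JavaScript, and `countMatches()` is a hypothetical name rather than something defined in the chapter.

```javascript
// Count the characters that match the target in the same position.
function countMatches(phrase, target) {
  let score = 0;
  for (let i = 0; i < target.length; i++) {
    if (phrase[i] === target[i]) score++;
  }
  return score;
}

for (const phrase of ["car", "hut", "box"]) {
  console.log(phrase, countMatches(phrase, "cat"));
}
// car 2
// hut 1
// box 0
```

Position matters here: a t anywhere other than the third slot earns nothing.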

Once the fitness has been calculated for all members of the population, the next part of the selection process is to choose which members are fit to become parents and place them in a mating pool. This step has several approaches. For example, I could employ the elitist method and say, “Which two members of the population scored the highest? You two will make all the children for the next generation.” This is probably one of the easier methods to code, but it flies in the face of the principle of variation. If two members of the population (out of perhaps thousands) are the only ones available to reproduce, the next generation will have little variety, and this may stunt the evolutionary process.

I could instead make a mating pool out of a larger number of elements—for example, the top 50 percent of the population. This is another easy one to code, but it also won’t produce optimal results. In this case, the highest-scoring elements would have the same chance of being selected as the ones toward the middle. In a population of 1,000 phrases, why should the phrase ranked 500th have the same chance of reproducing as the phrase ranked 1st? For that matter, why should phrase 500 have a solid shot of reproducing, while phrase 501 has no shot at all?

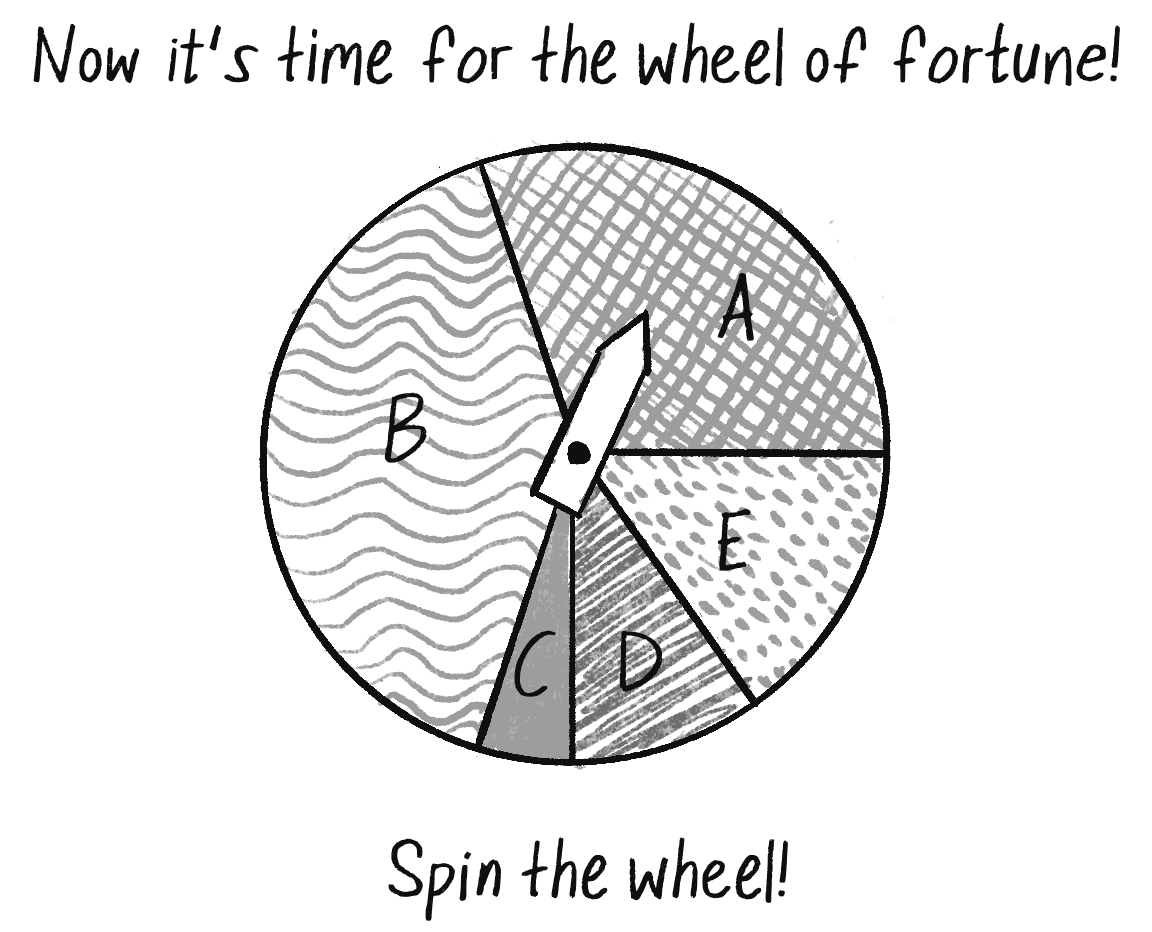

A better solution for the mating pool is to use a probabilistic method, which I’ll call the wheel of fortune (aka the roulette wheel). To illustrate this method, let’s say a population has five elements, each with a fitness score.

| Element | Fitness |
|---|---|
| A | 3 |
| B | 4 |
| C | 0.5 |
| D | 1 |
| E | 1.5 |

The first step is to normalize all the scores. Remember normalizing a vector? That involved taking a vector and standardizing its length, setting it to 1. Normalizing a set of fitness scores standardizes their range from 0 to 1, as a percentage of total fitness. For that, first add up all the fitness scores:

$$\text{total fitness} = 3 + 4 + 0.5 + 1 + 1.5 = 10$$

Next, divide each score by the total fitness, resulting in the normalized fitness.

| Element | Fitness | Normalized Fitness | Expressed as a Percentage |
|---|---|---|---|
| A | 3 | 0.3 | 30% |
| B | 4 | 0.4 | 40% |
| C | 0.5 | 0.05 | 5% |
| D | 1 | 0.1 | 10% |
| E | 1.5 | 0.15 | 15% |
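The normalization in the table amounts to one sum and one division per element. Here is a minimal sketch in plain JavaScript. The `normalize()` helper is a hypothetical name, and it assumes the total fitness is greater than zero.

```javascript
const scores = { A: 3, B: 4, C: 0.5, D: 1, E: 1.5 };

// Divide each score by the total so that the results add up to 1.
// Assumption: at least one score is positive, so the total is not zero.
function normalize(fitnessByName) {
  const total = Object.values(fitnessByName).reduce((sum, f) => sum + f, 0);
  const normalized = {};
  for (const [name, fitness] of Object.entries(fitnessByName)) {
    normalized[name] = fitness / total;
  }
  return normalized;
}

console.log(normalize(scores));
// { A: 0.3, B: 0.4, C: 0.05, D: 0.1, E: 0.15 }
```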

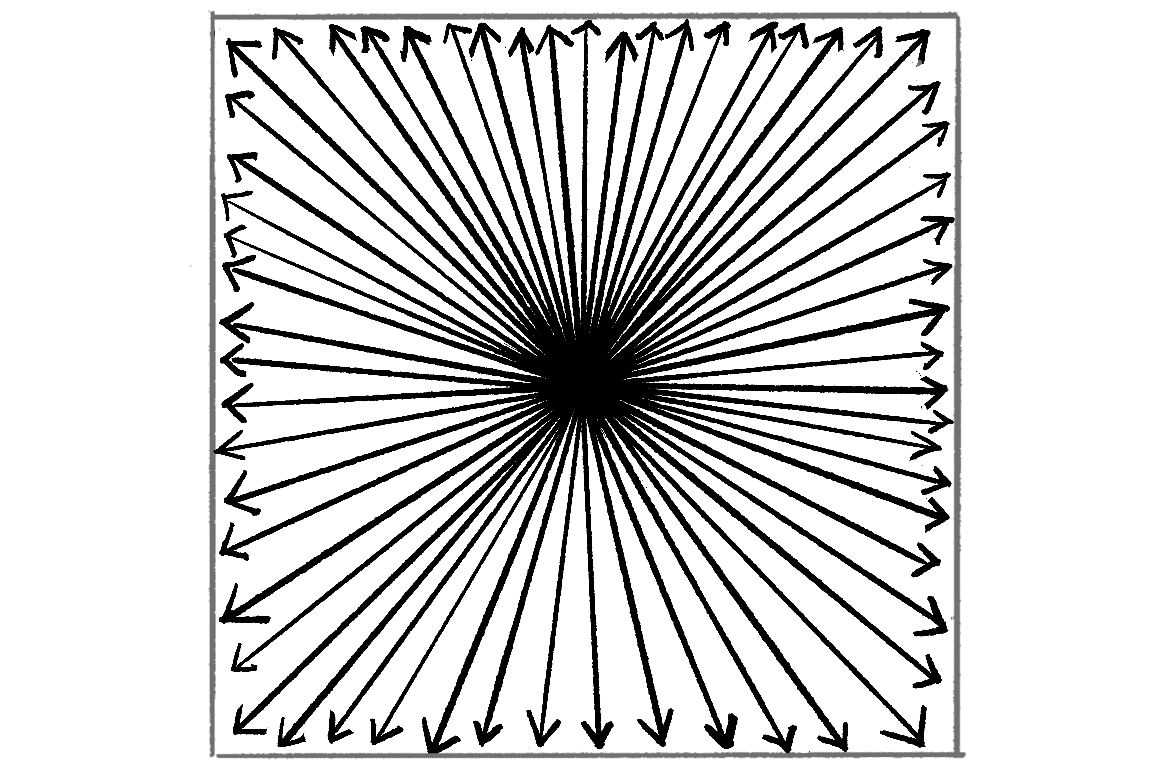

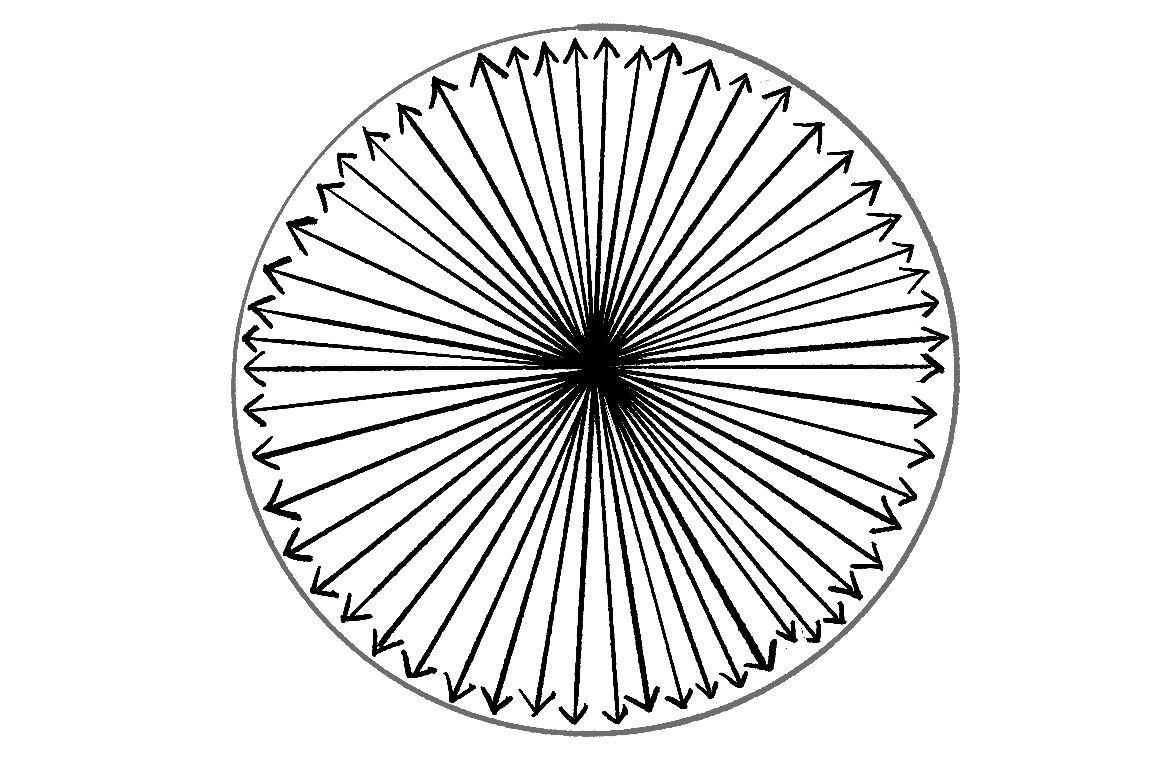

Now it’s time for the wheel of fortune, shown in Figure 9.2.

Spin the wheel and you’ll notice that element B has the highest chance of being selected, followed by A, then E, then D, and finally C. This probability-based selection according to fitness is an excellent approach. It guarantees that the highest-scoring elements will be most likely to reproduce, while also not entirely eliminating any variation from the population. Unlike with the elitist method, even the lowest-scoring element (in this case, C) has at least some chance of passing its information to the next generation. This is important because it’s quite possible (and often the case) that some low-scoring elements have tiny nuggets of genetic code that are truly useful and shouldn’t be removed from the population. For example, in the case of evolving “to be or not to be,” we might have the following elements:

| Element | DNA |
|---|---|
| A | to be or not to go |
| B | to be or not to pi |
| C | purrrrrrrrrrrrr be |

As you can see, elements A and B are clearly the most fit and would have the highest score. But neither contains the correct characters for the end of the phrase. Element C, even though it would receive a very low score, happens to have the genetic data for the end of the phrase. While I might want A and B to be picked to generate the majority of the next generation, I still want C to have a small chance to participate in the reproductive process too.
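One way to spin the wheel in code is to pick a random point along the total fitness and walk through the elements until that point is used up. The sketch below is plain JavaScript and only one of several possible approaches. `spinWheel()` is a hypothetical name, the `rng` parameter exists only so the behavior can be checked with fixed values, and the code assumes at least one element has a positive fitness.

```javascript
// Pick one element with probability proportional to its fitness.
// `rng` returns a number in [0, 1) and defaults to Math.random.
function spinWheel(elements, rng = Math.random) {
  const total = elements.reduce((sum, e) => sum + e.fitness, 0);
  let pointer = rng() * total; // where the wheel stops
  for (const element of elements) {
    pointer -= element.fitness; // move past this element's slice
    if (pointer < 0) return element;
  }
  return elements[elements.length - 1]; // guard against rounding error
}

const wheel = [
  { name: "A", fitness: 3 },
  { name: "B", fitness: 4 },
  { name: "C", fitness: 0.5 },
  { name: "D", fitness: 1 },
  { name: "E", fitness: 1.5 },
];

// Tally many spins; counts should roughly follow 30/40/5/10/15 percent.
const tally = {};
for (let i = 0; i < 10000; i++) {
  const { name } = spinWheel(wheel);
  tally[name] = (tally[name] || 0) + 1;
}
console.log(tally);
```

Even element C, with its 5 percent slice, shows up in the tally a few hundred times out of 10,000 spins, which is exactly the small chance described above.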

Step 3: Reproduction

Now that I’ve demonstrated a strategy for picking parents, the last step is to use reproduction to create the population’s next generation, keeping in mind the Darwinian principle of heredity—that children inherit properties from their parents. Again, numerous techniques could be employed here. For example, one reasonable (and easy-to-program) strategy is cloning, meaning just one parent is picked and an exact copy of that parent is created as a child element. As with the elitist approach to selection, however, this runs counter to the goal of variation. Instead, the standard approach with GAs is to pick two parents and create a child according to two steps:

- Crossover

- Mutation

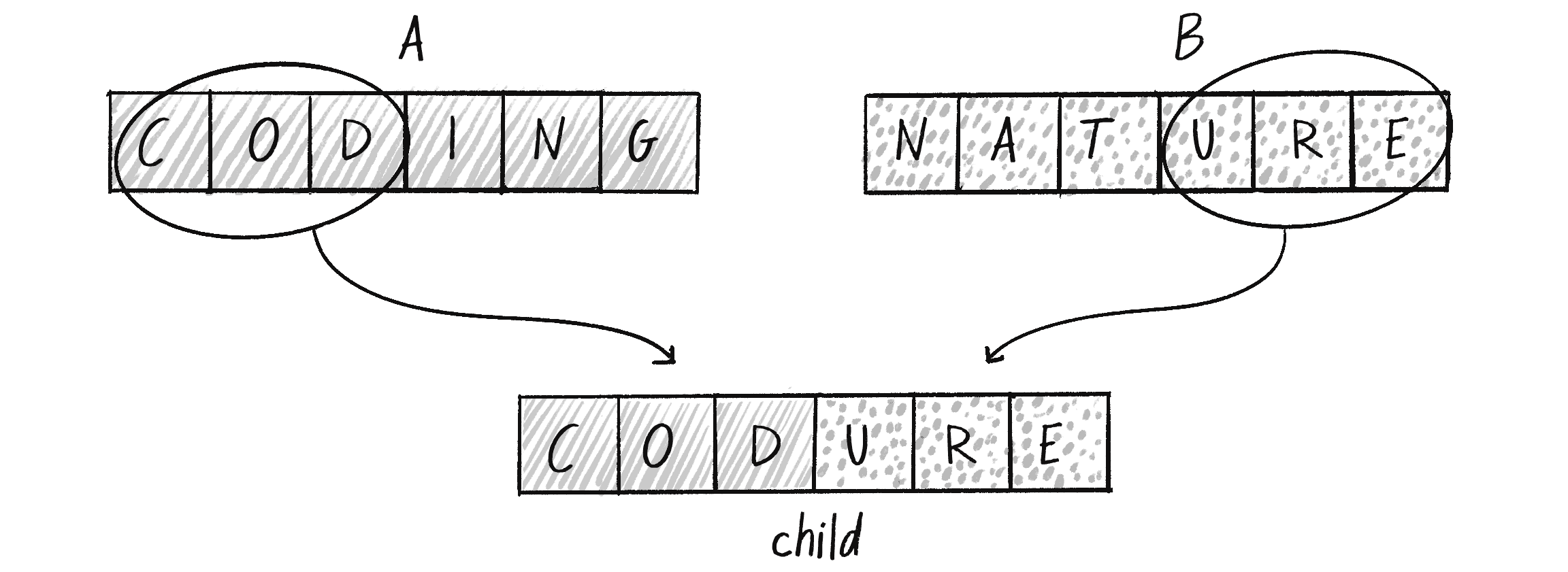

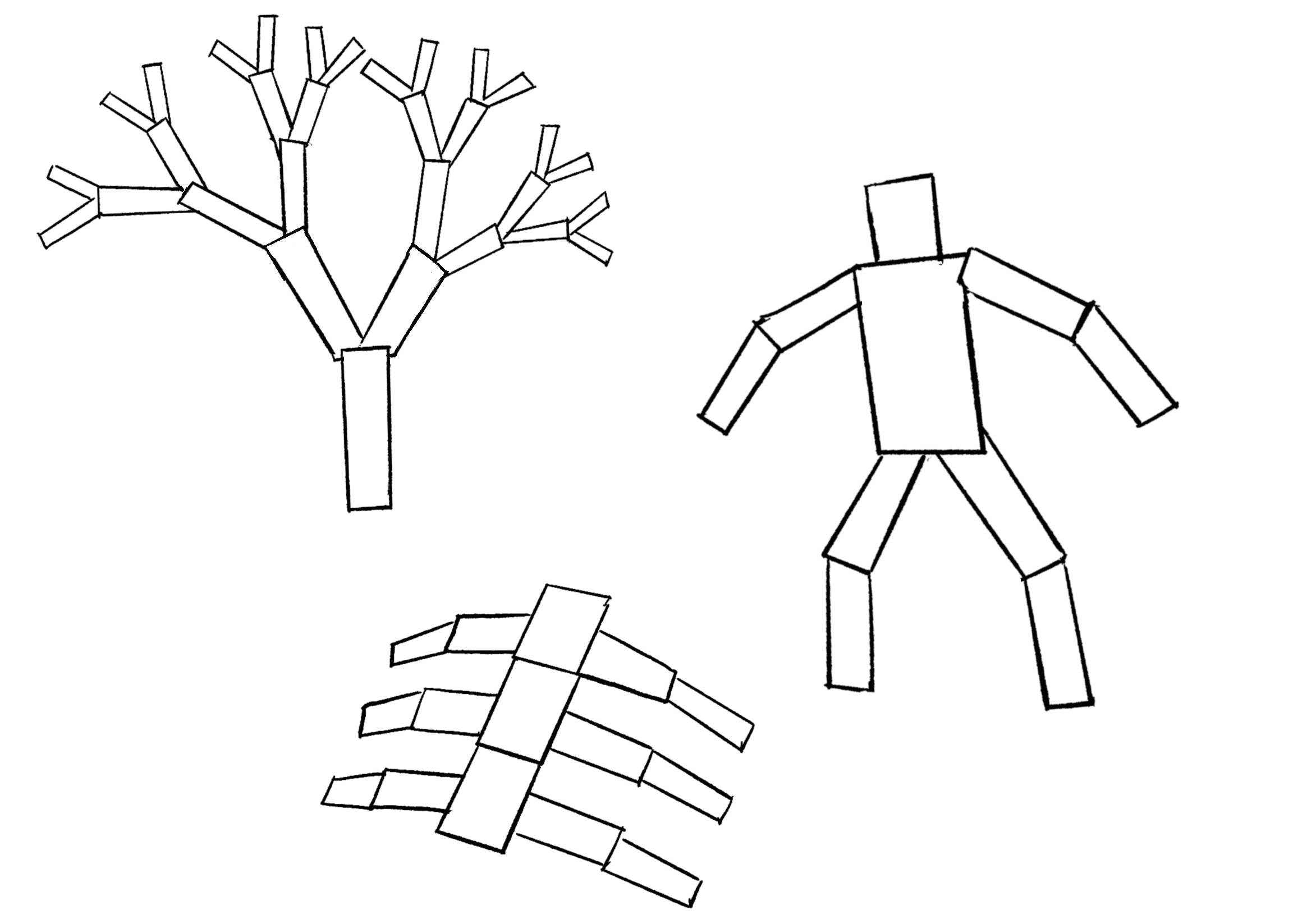

The first step, crossover, creates a child out of the genetic code of two parents. For the cat-typing example, say I’ve picked the following two parent phrases from the mating pool, as outlined in the selection step (I’m simplifying and using strings of length 6, instead of the 18 characters required for “to be or not to be”):

| Parent A | coding |

| Parent B | nature |

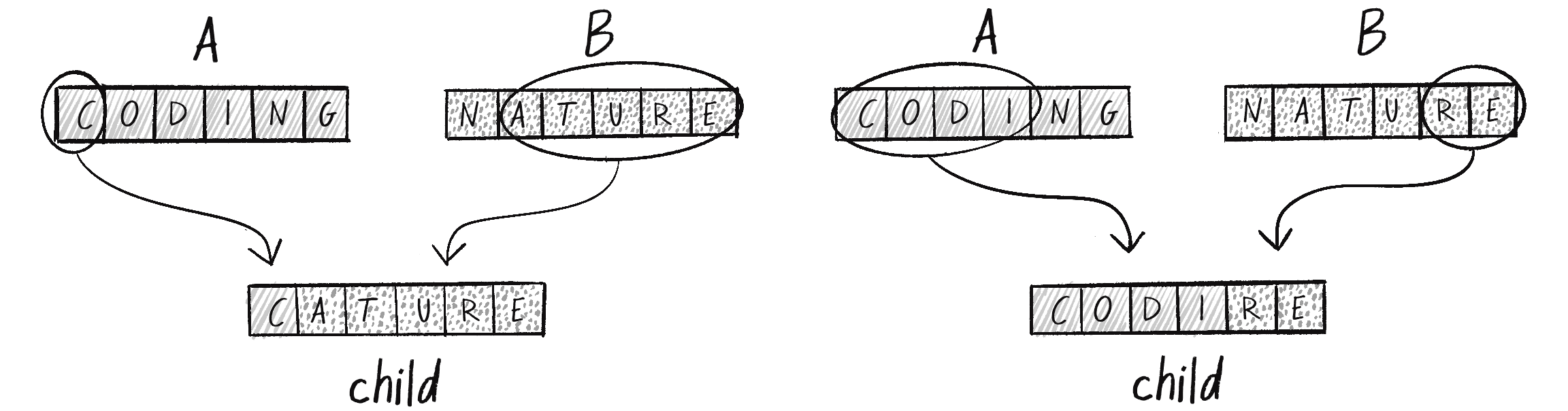

The task at hand is now to create a child phrase from these two. Perhaps the most obvious way (call it the 50/50 method) would be to take the first three characters from A and the second three from B, as shown in Figure 9.3.

A variation of this technique is to pick a random midpoint. In other words, I don’t always have to pick exactly half of the characters from each parent. I could use a combination of 1 and 5, or 2 and 4. This is preferable to the 50/50 approach, since it increases the variety of possibilities for the next generation (see Figure 9.4).

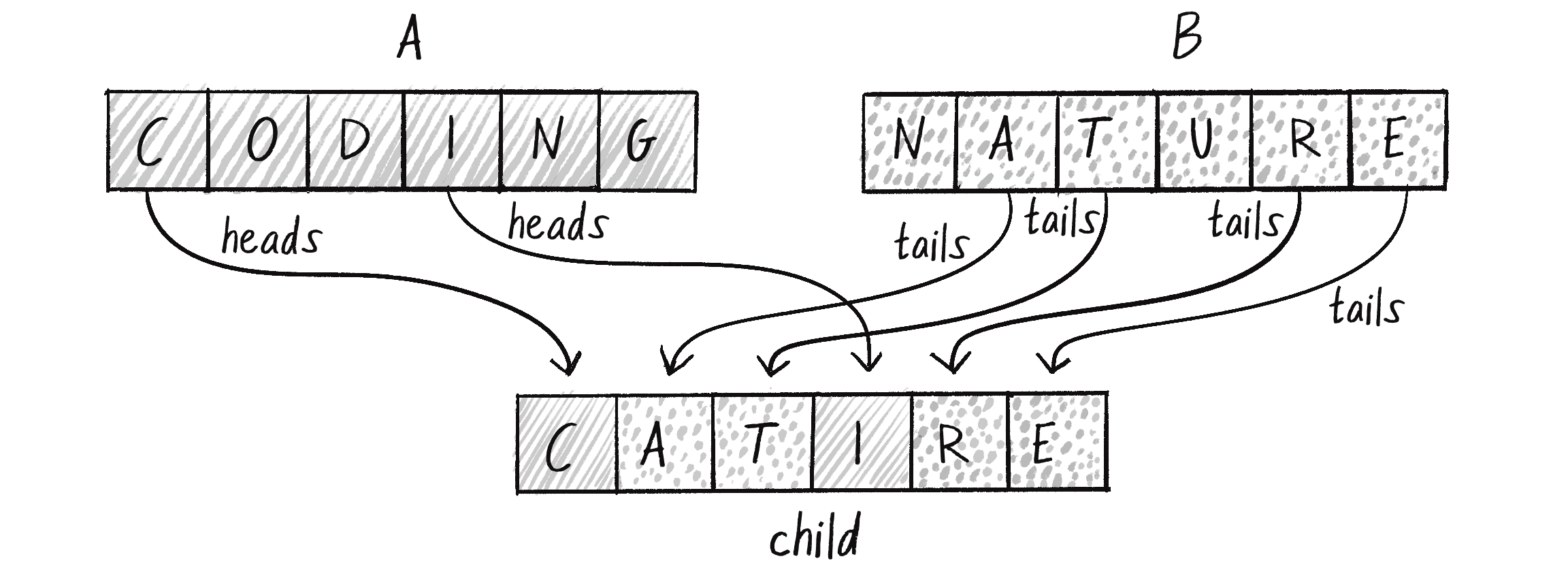

Another possibility is to randomly select a parent for each character in the child string, as in Figure 9.5. You can think of this as flipping a coin six times: heads, take a character from parent A; tails, from parent B. This yields even more possible outcomes: codurg, natine, notune, and so on.

This strategy won’t significantly change the outcome from the random midpoint method; however, if the order of the genetic information plays a role in the fitness function, you may prefer one solution over the other. Other problems may benefit more from the randomness introduced by the coin-flipping approach.
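Here is a minimal sketch of the random midpoint and coin-flipping strategies in plain JavaScript, working on strings for brevity. The function names are hypothetical, the `rng` parameter (defaulting to `Math.random`) is there only to make the results reproducible, and both functions assume the parents have the same length of at least two characters.

```javascript
// Random-midpoint crossover: characters before the midpoint come from
// parent A, the rest from parent B. The midpoint is chosen so that each
// parent contributes at least one character.
function midpointCrossover(a, b, rng = Math.random) {
  const midpoint = 1 + Math.floor(rng() * (a.length - 1));
  return a.slice(0, midpoint) + b.slice(midpoint);
}

// Coin-flip crossover: each character is taken from parent A or
// parent B with equal probability.
function coinFlipCrossover(a, b, rng = Math.random) {
  let child = "";
  for (let i = 0; i < a.length; i++) {
    child += rng() < 0.5 ? a[i] : b[i];
  }
  return child;
}

// A fixed "random" value of 0.5 lands on the 50/50 split.
console.log(midpointCrossover("coding", "nature", () => 0.5)); // codure
console.log(coinFlipCrossover("coding", "nature"));
```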

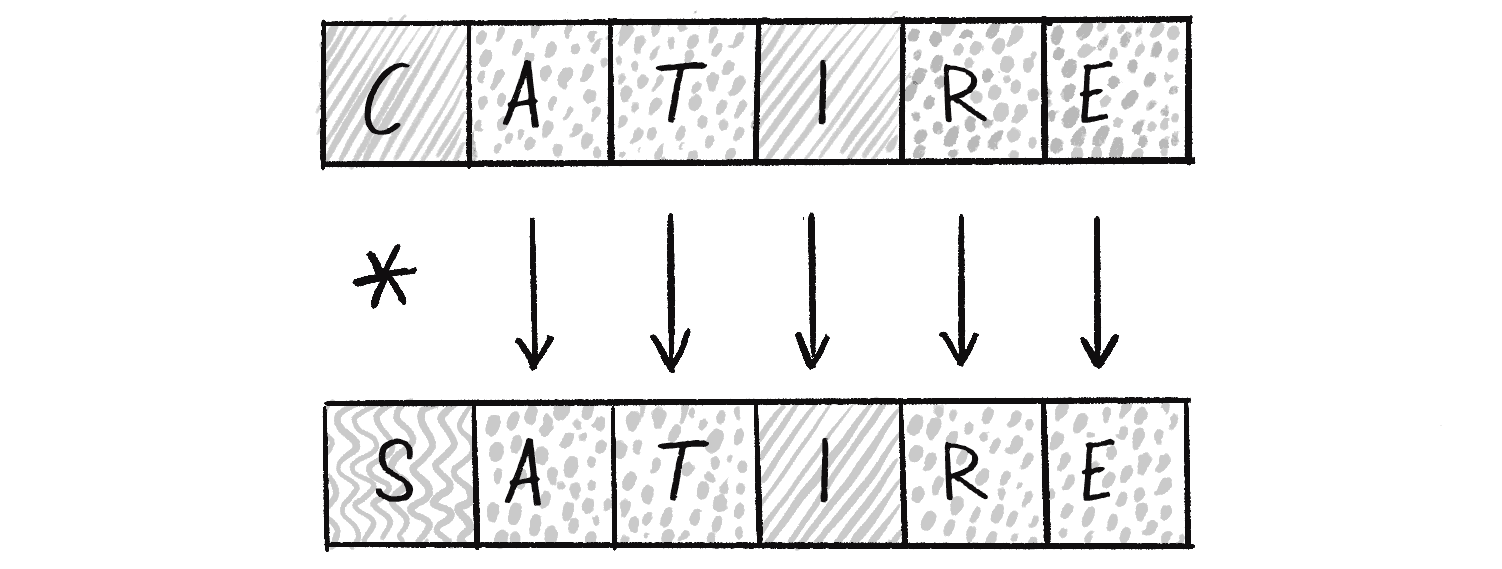

Once the child DNA has been created via crossover, an extra, optional process can be applied before adding the child to the next generation: mutation. This second reproduction stage is unnecessary in some cases, but it exists to further uphold the Darwinian principle of variation. The initial population was created randomly, ensuring a variety of elements at the outset. However, this variation is limited by the size of the population, and the variation narrows over time by virtue of selection. Mutation introduces additional variety throughout the evolutionary process.

Mutation is described in terms of a rate. A given GA might have a mutation rate of 5 percent, or 1 percent, or 0.1 percent, for example. Say I’ve arrived through crossover at the child phrase catire. If the mutation rate is 1 percent, this means that each character in the phrase has a 1 percent chance of mutating before being “born” into the next generation. What does it mean for a character to mutate? In this case, mutation could be defined as picking a new random character. A 1 percent probability is fairly low, so most of the time mutation won’t occur at all in a six-character string (about 94 percent of the time, in fact). However, when it does, the mutated character is replaced with a randomly generated one (see Figure 9.6).

As you’ll see in the coming examples, the mutation rate can greatly affect the behavior of the system. A very high mutation rate (such as, say, 80 percent) would negate the entire evolutionary process and leave you with something more akin to a brute-force algorithm. If the majority of a child’s genes are generated randomly, you can’t guarantee that the more fit genes occur with greater frequency with each successive generation.
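Mutation can be sketched as a loop that gives every character an independent chance of being replaced. The following plain JavaScript is an illustration under assumptions: the 27-character alphabet is carried over from the typing-cat setup, `mutate()` is a hypothetical name, and `rng` defaults to `Math.random`.

```javascript
// Assumption: mutated characters are drawn from a-z plus space.
const ALPHABET = "abcdefghijklmnopqrstuvwxyz ";

// Give each character a `mutationRate` chance of being replaced
// by a randomly chosen character.
function mutate(phrase, mutationRate, rng = Math.random) {
  let result = "";
  for (const ch of phrase) {
    if (rng() < mutationRate) {
      result += ALPHABET[Math.floor(rng() * ALPHABET.length)];
    } else {
      result += ch;
    }
  }
  return result;
}

console.log(mutate("catire", 0.01)); // usually still "catire"

// Chance that a six-character phrase passes through untouched at a 1% rate.
const unchanged = Math.pow(1 - 0.01, 6);
console.log(unchanged.toFixed(3)); // 0.941
```

Note that the replacement character can happen to equal the original, so the effective rate of visible change is slightly lower than the nominal mutation rate.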

Overall, the process of selection (picking two parents) and reproduction (crossover and mutation) is repeated N times until you have a new population of N child elements.

Step 4: Repetition!

At this point, the new population of children becomes the current population. Then the process returns to step 2 and starts all over again, evaluating the fitness of each element, selecting parents, and producing another generation of children. Hopefully, as the algorithm cycles through more and more generations, the system evolves closer and closer to the desired solution.

Coding the Genetic Algorithm

Now that I’ve described all the steps of the GA, it’s time to translate them into code. Before I dive into the details of the implementation, let’s think about how these steps fit into the overall standard structure of a p5.js sketch. What goes into setup(), and what goes into draw()?

setup()

Step 1, Initialization: Create a starting population of N elements, each with randomly generated DNA.

draw()

Step 2, Selection: Evaluate the fitness of each element of the population and build a mating pool.

Step 3, Reproduction: Repeat N times:

- Pick two parents with probability according to relative fitness.

- Crossover: Create a child by combining the DNA of these two parents.

- Mutation: Modify the child’s DNA based on a given probability.

- Add the new child to a new population.

Step 4: Replace the old population with the new population and return to step 2.
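Before filling in the details, the shape of steps 2 through 4 can be sketched as a single function in plain JavaScript. The four callbacks are placeholders with hypothetical names standing in for the pieces developed in the rest of the chapter, so this shows only the structure of the loop and is not the chapter's implementation.

```javascript
// One pass through steps 2-4 for a population of N elements.
function nextGeneration(population, { fitness, pickParent, crossover, mutate }) {
  // Step 2, selection: score every element.
  const scored = population.map((dna) => ({ dna, fitness: fitness(dna) }));

  // Step 3, reproduction: repeat N times.
  const children = [];
  for (let i = 0; i < population.length; i++) {
    const parentA = pickParent(scored);
    const parentB = pickParent(scored);
    const child = mutate(crossover(parentA.dna, parentB.dna));
    children.push(child);
  }

  // Step 4: the children become the new population.
  return children;
}

// Trivial stand-ins, just to show the call shape.
const next = nextGeneration(["aaa", "bbb"], {
  fitness: (dna) => dna.length,
  pickParent: (scored) => scored[0],
  crossover: (a, b) => a,
  mutate: (dna) => dna,
});
console.log(next); // [ 'aaa', 'aaa' ]
```

In a p5.js sketch, one call like this per frame inside `draw()` would advance the simulation by one generation.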

With this plan in place, I can start writing the code.

Step 1: Initialization

If I’m going to create a population, I need a data structure to store a list of elements in the population:

// An array for the population of elements
let population = [];

Choosing an array to represent a list is straightforward, but the question remains: An array of what? An object is an excellent choice for storing the genetic information, as it can hold multiple properties and methods. These genetic objects will be structured according to a class that I’ll call DNA:

class DNA {

}

What should go in the DNA class? For a typing cat, its DNA would be the random phrase it types, a string of characters. However, using an array of characters (rather than a string object) provides a more generic template that can extend easily to other data types. For example, the DNA of a creature in a physics system could be an array of vectors—or for an image, an array of numbers (RGB pixel values). Any set of properties can be listed in an array, and even though a string is convenient for this particular scenario, an array will serve as a better foundation for future evolutionary examples.

The GA specifies that I create a population of N elements, each with randomly generated genes. The DNA constructor therefore includes a loop to fill in each element of the genes array:

class DNA {
  constructor(length) {
    // The individual genes are stored in an array.
    this.genes = [];
    // There are length genes.
    for (let i = 0; i < length; i++) {
      // Each gene is a random character.
      this.genes[i] = randomCharacter();
    }
  }
}

To randomly generate a character, I’ll write a helper function called randomCharacter() for each individual gene:

// Return a random character (letter, number, symbol, space, and so forth).
function randomCharacter() {
  let c = floor(random(32, 127));
  return String.fromCharCode(c);
}

The random numbers picked correspond to a specific character according to a standard known as ASCII (American Standard Code for Information Interchange), and String.fromCharCode() is a native JavaScript method that converts a number into its corresponding character based on that standard. The range I’ve specified encompasses upper- and lowercase letters, numbers, punctuation marks, and special characters. An alternative approach could use the Unicode standard, which includes emojis and characters from various world languages, providing a more extensive range of characters for a different target string.
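To see what that range covers, the following plain JavaScript prints a few of the characters that the codes 32 through 126 map to. It is a quick illustration that runs outside p5.js, and the `printable` variable is my own.

```javascript
// A few landmarks in the range of character codes.
console.log(JSON.stringify(String.fromCharCode(32))); // " " (the space)
console.log(String.fromCharCode(65)); // A
console.log(String.fromCharCode(97)); // a
console.log(String.fromCharCode(126)); // ~

// Collect every character that floor(random(32, 127)) can produce.
let printable = "";
for (let code = 32; code < 127; code++) {
  printable += String.fromCharCode(code);
}
console.log(printable.length); // 95
console.log(printable);
```

With 95 possible characters per gene instead of 27, the search space is considerably larger than in the Clawdius example.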

Now that I have the constructor, I can return to setup() and initialize each DNA object in the population array:

let population = [];

function setup() {
  // Initialize each element of the population; 100 and 18 are hardcoded
  // for now as the population size and length of the genes array.
  for (let i = 0; i < 100; i++) {
    population[i] = new DNA(18);
  }
}

The DNA class is not at all complete. I need to give it methods that perform all the other tasks in the GA. I’ll do that as I walk through steps 2 and 3.

Step 2: Selection

Step 2 reads, “Evaluate the fitness of each element of the population and build a mating pool.” I’ll start with the first part, evaluating each object’s fitness. Earlier I stated that one possible fitness function for the typed phrases is the total number of correct characters. Now I’ll revise this fitness function a little bit and state it as the percentage of correct characters (that is, the number of correct characters divided by the total number of characters):

$$\text{fitness} = \frac{\text{number of correct characters}}{\text{total number of characters}}$$

Where should I calculate the fitness? Since the DNA class contains the genetic information (the phrase I will test against the target phrase), I can write a method inside the DNA class to score its own fitness. Let’s assume a target phrase:

let target = "to be or not to be";

I can now compare each gene against the corresponding character in the target phrase, incrementing a counter each time I find a correct character in the correct position. For example, a t is found in several places in target, but it increases the fitness only if it is in the genes array at the correct corresponding index:

class DNA {

constructor(length) {

this.genes = [];

this.fitness = 0;

Add a variable to track fitness.

for (let i = 0; i < length; i++) {

this.genes[i] = randomCharacter();

}

}

calculateFitness(target) {

let score = 0;

for (let i = 0; i < this.genes.length; i++) {

if (this.genes[i] === target.charAt(i)) {

score++;

}

}

this.fitness = score / target.length;

}

Compute fitness as a percentage of correct characters.

}
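To see the position rule in action, here’s a minimal sketch that scores plain strings against the target. The function fitnessOf() is a hypothetical standalone version of calculateFitness() that I’ve written only for illustration; it takes a string instead of reading this.genes.

```javascript
// Hypothetical standalone version of calculateFitness() for plain strings.
function fitnessOf(phrase, target) {
  let score = 0;
  for (let i = 0; i < phrase.length; i++) {
    // A character counts only if it matches at the same index.
    if (phrase.charAt(i) === target.charAt(i)) {
      score++;
    }
  }
  return score / target.length;
}

let target = "to be or not to be";

// Eighteen t's match the target only at indices 0, 11, and 13.
console.log(fitnessOf("tttttttttttttttttt", target)); // 3 / 18, about 0.167

// A single t in the wrong place earns nothing.
console.log(fitnessOf("xtxxxxxxxxxxxxxxxx", target)); // 0

// A perfect match scores 1.
console.log(fitnessOf("to be or not to be", target)); // 1
```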

Since fitness is calculated for each subsequent generation, the very first step I’ll take inside the draw() loop is to call the fitness function for each member of the population:

function draw() {

for (let phrase of population) {

phrase.calculateFitness(target);

}

}

Once the fitness scores have been computed, the next step is to build the mating pool for the reproduction process. The mating pool is a data structure from which two parents are repeatedly selected. Recalling the description of the selection process, the goal is to pick parents with probabilities calculated according to fitness. The members of the population with the highest fitness scores should be the most likely to be selected; those with the lowest scores, the least likely.

In Chapter 0, I covered the basics of probability and generating a custom distribution of random numbers. I’m going to use the same techniques here to assign a probability to each member of the population, picking parents by spinning the wheel of fortune. Looking back at Figure 9.2, you might immediately think of Chapter 3 and contemplate coding a simulation of an actual spinning wheel. As fun as this might be (and you should make one!), it’s quite unnecessary.

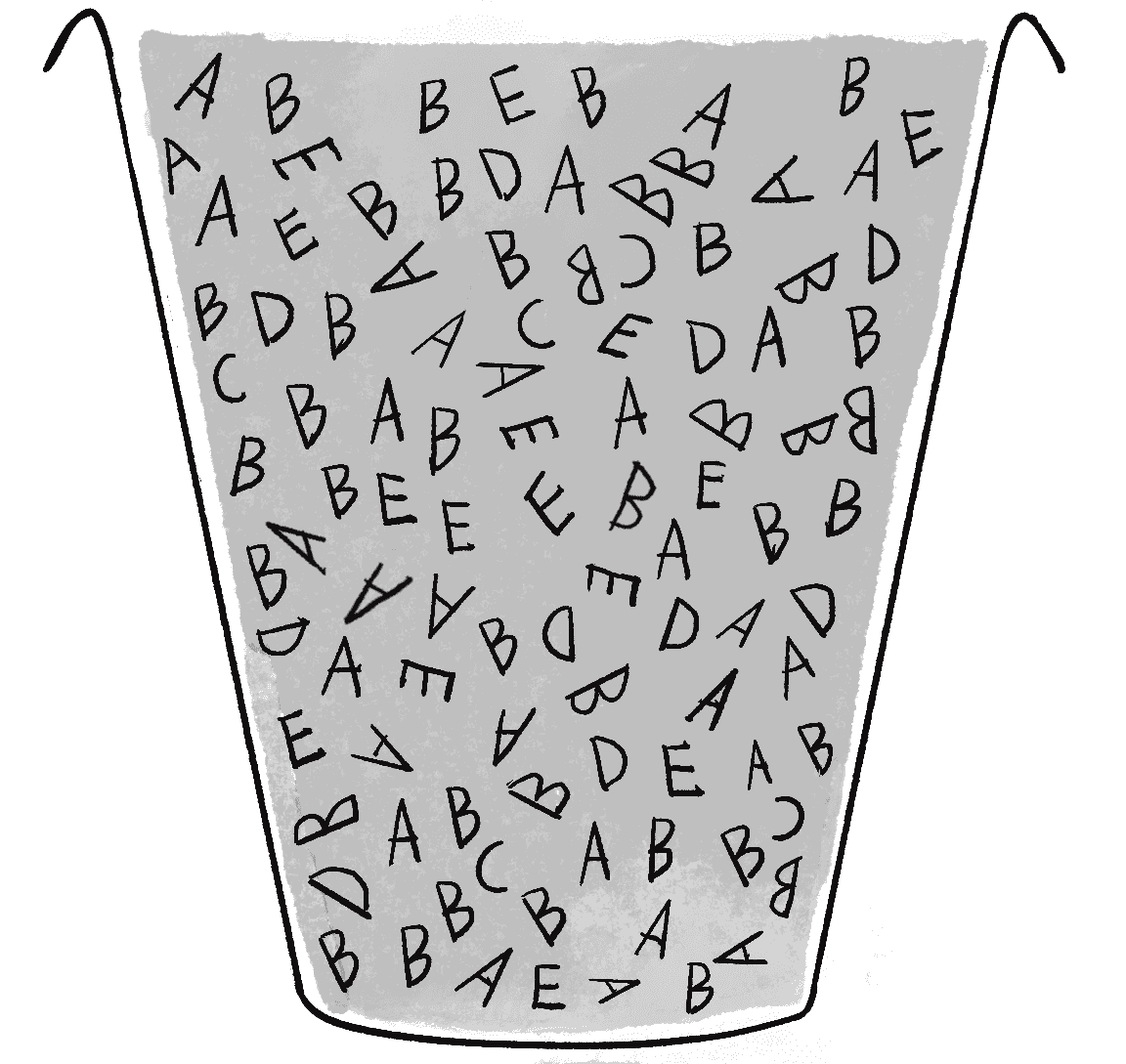

One solution that could work here is to pick from the five options depicted in Figure 9.2 (A, B, C, D, E) according to their probabilities by filling an array with multiple instances of each parent. In other words, imagine you have a bucket of wooden letters, as in Figure 9.7. Based on the earlier probabilities, it should contain 30 As, 40 Bs, 5 Cs, 10 Ds, and 15 Es. If you were to pick a random letter out of that bucket, you’d have a 30 percent chance of getting an A, a 5 percent chance of getting a C, and so on.
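The bucket idea can be sketched in a few lines. This version uses plain JavaScript with Math.random() standing in for p5.js’s random(), and the names counts, bucket, and pickFrom() are my own placeholders.

```javascript
// Probabilities from the wheel of fortune, expressed as counts out of 100.
const counts = { A: 30, B: 40, C: 5, D: 10, E: 15 };

// Fill the bucket with the right number of copies of each letter.
let bucket = [];
for (let letter in counts) {
  for (let i = 0; i < counts[letter]; i++) {
    bucket.push(letter);
  }
}

// Hypothetical helper: pick one element uniformly at random.
function pickFrom(array) {
  return array[Math.floor(Math.random() * array.length)];
}

console.log(bucket.length);    // 100
console.log(pickFrom(bucket)); // "B" about 40 percent of the time
```

Every slot in the bucket is equally likely, so the letters with more copies come up more often without any extra probability math.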

For the GA code, that bucket could be an array, and each wooden letter a potential parent DNA object. The mating pool is therefore created by adding each parent to the array a certain number of times, scaled according to that parent’s fitness score:

let matingPool = [];

Start with an empty mating pool.

for (let phrase of population) {

let n = floor(phrase.fitness * 100);

n is equal to fitness times 100.

100 is an arbitrary way to scale the percentage of fitness to a larger integer value.

for (let j = 0; j < n; j++) {

matingPool.push(phrase);

Add each member of the population to the mating pool n times.

}

}

With the mating pool ready to go, it’s time to select two parents! Picking two parents for each child is a somewhat arbitrary decision. It certainly mirrors human reproduction and is the standard means in the textbook GA, but in terms of creative applications, there really aren’t restrictions here. You could choose only one parent for cloning, or devise a reproduction methodology for picking three or four parents from which to generate child DNA. For this demonstration, I’ll stick to two parents and call them parentA and parentB.

I can select two random instances of DNA from the mating pool by using the p5.js random() function. When an array is passed as an argument to random(), the function returns a single random element from the array:

let parentA = random(matingPool);

let parentB = random(matingPool);

This method of building a mating pool and choosing parents from it works, but it isn’t the only way to perform selection. Other, more memory-efficient techniques don’t require an additional array full of multiple references to each element. For example, think back to the discussion of nonuniform distributions of random numbers in Chapter 0. There, I implemented the accept-reject method. If applied here, the approach would be to randomly pick an element from the original population array, and then pick a second, qualifying random number to check against the element’s fitness value. If the fitness is less than the qualifying number, start again and pick a new element. Keep going until two parents are deemed fit enough.

Yet another excellent alternative that similarly capitalizes on the principle of fitness-proportionate selection is worth exploring. To understand how it works, imagine a relay race in which each member of the population runs a given distance tied to its fitness. The higher the fitness, the farther they run. Let’s also assume that the fitness values have been normalized to all add up to 1 (just as with the wheel of fortune). The first step is to pick a starting line: a random distance from the finish. This distance is a random number from 0 to 1. (You’ll see in a moment that the finish line is assumed to be at 0.)

let start = random(1);

Then the relay race begins at the starting line with the first member of the population:

let index = 0;

The runner travels a distance defined by its normalized fitness score, then hands the baton to the next runner:

while (start > 0) {

start = start - population[index].fitness;

Move a distance according to fitness.

index++;

Pass the baton to the next element.

}

The steps are repeated over and over again in a while loop until the race ends (start is less than or equal to 0, the finish line). The runner who crosses the finish threshold is selected as a parent.

Here are all the steps together in a function that returns the selected element:

function weightedSelection() {

let index = 0;

Start with the first element.

let start = random(1);

Pick a starting point.

while (start > 0) {

At the finish line?

start = start - population[index].fitness;

Move a distance according to fitness.

index++;

Pass the baton to the next element.

}

index--;

Undo moving to the next element since the finish has been reached.

return population[index];

}

This works well for selection because every member has a shot at crossing the finish line (the elements’ fitness scores all add up to 1), but those who run longer distances (that is, those with higher fitness scores) have a better chance of making it there. However, while this method is more memory efficient, it can be more computationally demanding, especially for large populations, as it requires iterating through the population for each selection. By contrast, the original matingPool array method needs only a single random lookup into the array per parent.
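The relay race depends on the fitness values adding up to 1, a step the snippets above take for granted. Here’s one possible way to handle it, sketched in plain JavaScript. The helpers normalize() and relayIndex() are hypothetical names of my own, the starting distance is passed in so the result is easy to trace, and the extra bounds check is an assumption I’ve added to guard against floating-point drift.

```javascript
// Hypothetical helper: scale raw scores so they add up to 1.
function normalize(scores) {
  let total = 0;
  for (let s of scores) {
    total += s;
  }
  // Assumption: if every score is 0, fall back to equal shares.
  if (total === 0) {
    return scores.map(() => 1 / scores.length);
  }
  return scores.map((s) => s / total);
}

// Relay race over normalized scores; start is a number from 0 to 1.
// Returns the index of the selected element.
function relayIndex(normalized, start) {
  let index = 0;
  // The bounds check is an added guard, not part of the original loop.
  while (start > 0 && index < normalized.length) {
    start = start - normalized[index];
    index++;
  }
  // Step back to the runner who crossed the line (stay at 0 if nobody ran).
  return Math.max(index - 1, 0);
}

let shares = normalize([3, 1, 1]); // [0.6, 0.2, 0.2]
console.log(relayIndex(shares, 0.5));  // 0
console.log(relayIndex(shares, 0.7));  // 1
console.log(relayIndex(shares, 0.95)); // 2
console.log(relayIndex(shares, Math.random()));
```

With shares of 0.6, 0.2, and 0.2, any starting distance up to 0.6 selects the first element, which is exactly the 60 percent chance it should have.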

Depending on the specific requirements and constraints of your application of GAs, one approach might prove more suitable than the other. I’ll alternate between them in the examples outlined in this chapter.

Exercise 9.2

Revisit the accept-reject algorithm from Chapter 0 and rewrite the weightedSelection() function to use accept-reject instead. Like the relay race method, this technique can also end up being computationally intensive, since several potential parents may be rejected as unfit before one is finally chosen.

Exercise 9.3

In some cases, the wheel-of-fortune algorithm will have an extraordinarily high preference for some elements over others. Take the following probabilities:

| Element | Probability |

|---|---|

| A | 98% |

| B | 1% |

| C | 1% |

This is sometimes undesirable, given that it will decrease the amount of variety in this system. A solution to this problem is to replace the calculated fitness scores with the ordinals of scoring (meaning their rank):

| Element | Rank | Probability |

|---|---|---|

| A | 1 | 50% (1/2) |

| B | 2 | 33% (1/3) |

| C | 3 | 17% (1/6) |

How can you implement an approach like this? Hint: You don’t need to modify the selection algorithm. Instead, your task is to calculate the probabilities from the rank rather than the raw fitness score.

For any of these algorithms, the same parent could be picked twice for a given child. If I wanted, I could enhance the algorithm to ensure that this isn’t possible. This would likely have very little impact on the end result, but it may be worth exploring as an exercise.

Exercise 9.4

Pick any of the weighted selection algorithms and adapt the algorithm to guarantee that two unique parents are picked.

Step 3: Reproduction (Crossover and Mutation)

Once I have the two parents, the next step is to perform a crossover operation to generate child DNA, followed by mutation:

let child = parentA.crossover(parentB);

A function for crossover

child.mutate(mutationRate);

A function for mutation

Of course, the crossover() and mutate() methods don’t magically exist in the DNA class; I have to write them. The way I’ve called crossover() indicates that it should receive an instance of DNA as an argument (parentB) and return a new instance of DNA, the child:

crossover(partner) {

let child = new DNA(this.genes.length);

The child is a new instance of DNA.

(Note that the genes are generated randomly in the DNA constructor,

but the crossover method will override the array.)

let midpoint = floor(random(this.genes.length));

Pick a random midpoint in the genes array.

for (let i = 0; i < this.genes.length; i++) {

if (i < midpoint) {

child.genes[i] = this.genes[i];

Before the midpoint, take genes from this DNA.

} else {

child.genes[i] = partner.genes[i];

From the midpoint on, take from the partner DNA.

}

}

return child;

}

This implementation uses the random midpoint method of crossover, in which the first section of genes is taken from parent A and the second from parent B.
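To make the midpoint method concrete, here’s a small sketch with the midpoint passed in explicitly rather than chosen at random. The function crossoverAt() is a hypothetical helper that works on plain arrays, not the DNA class’s own method.

```javascript
// Hypothetical standalone crossover with the midpoint passed in explicitly.
function crossoverAt(genesA, genesB, midpoint) {
  let child = [];
  for (let i = 0; i < genesA.length; i++) {
    // Indices below the midpoint come from A; the rest come from B.
    child[i] = i < midpoint ? genesA[i] : genesB[i];
  }
  return child;
}

let a = "abcdef".split("");
let b = "UVWXYZ".split("");
console.log(crossoverAt(a, b, 2).join("")); // "abWXYZ"
console.log(crossoverAt(a, b, 5).join("")); // "abcdeZ"
console.log(crossoverAt(a, b, 0).join("")); // "UVWXYZ"
```

Because floor(random(this.genes.length)) never reaches the length of the array, the last gene always comes from parent B, and a midpoint of 0 copies parent B entirely.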

Exercise 9.5

Rewrite the crossover function to use the coin-flipping method instead, in which each gene has a 50 percent chance of coming from parent A and a 50 percent chance of coming from parent B.

The mutate() method is even simpler to write than crossover(). All I need to do is loop through the array of genes and randomly pick a new character according to the defined mutation rate. With a mutation rate of 1 percent, for example, a new character would be generated only 1 out of 100 times:

let mutationRate = 0.01;

if (random(1) < mutationRate) {

/* Any code here would be executed 1% of the time. */

}

The entire method therefore reads as follows:

mutate(mutationRate) {

for (let i = 0; i < this.genes.length; i++) {

Look at each gene in the array.

if (random(1) < mutationRate) {

Check a random number against the mutation rate.

this.genes[i] = randomCharacter();

Mutation means choosing a new random character.

}

}

}

Once again, I’m able to use the randomCharacter() helper function to simplify the mutation process.
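It can help to get a feel for how rarely mutation actually fires. The following sketch is a rough back-of-the-envelope calculation, assuming an 18-gene phrase and each gene being tested independently; the function names are my own.

```javascript
// Expected number of genes rerolled in one child: each gene is tested independently.
function expectedMutations(geneCount, mutationRate) {
  return geneCount * mutationRate;
}

// Probability that a child comes through with no genes rerolled at all.
function chanceUntouched(geneCount, mutationRate) {
  return Math.pow(1 - mutationRate, geneCount);
}

console.log(expectedMutations(18, 0.01)); // about 0.18
console.log(chanceUntouched(18, 0.01));   // about 0.83
```

In other words, at a 1 percent rate, most children are passed along exactly as crossover made them, and mutation nudges only a minority.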

Putting It All Together

I’ve now walked through the steps of the GA twice—once describing the algorithm in narrative form, and another time with code snippets implementing each of the steps. Now I’m ready to put it all together and show you the complete code alongside the basic steps of the algorithm.

Example 9.1: Genetic Algorithm for Evolving Shakespeare

let mutationRate = 0.01;

Mutation rate

let populationSize = 150;

Population size

let population = [];

Population array

let target = "to be or not to be";

Target phrase

function setup() {

createCanvas(640, 360);

for (let i = 0; i < populationSize; i++) {

population[i] = new DNA(target.length);

}

Step 1: Initialization

}

function draw() {

Step 2: Selection

for (let phrase of population) {

phrase.calculateFitness(target);

}

Step 2a: Calculate fitness.

let matingPool = [];

Step 2b: Build the mating pool.

for (let phrase of population) {

let n = floor(phrase.fitness * 100);

for (let j = 0; j < n; j++) {

matingPool.push(phrase);

}

Add each member n times according to its fitness score.

}

for (let i = 0; i < population.length; i++) {

let parentA = random(matingPool);

let parentB = random(matingPool);

Step 3: Reproduction

let child = parentA.crossover(parentB);

Step 3a: Crossover

child.mutate(mutationRate);

Step 3b: Mutation

population[i] = child;

Note that you are overwriting the population with the new

children. When draw() loops, you will perform all the same

steps with the new population of children.

}

Step 4: Repetition. Go back to the beginning of draw()!

}

The sketch.js file precisely mirrors the steps of the GA. However, most of the functionality called upon is encapsulated in the DNA class.

class DNA {

constructor(length) {

this.genes = [];

for (let i = 0; i < length; i++) {

this.genes[i] = randomCharacter();

}

this.fitness = 0;

}

Constructor (makes a random DNA)

getPhrase() {

return this.genes.join("");

}

Convert the array to a string of the phenotype.

calculateFitness(target) {

let score = 0;

for (let i = 0; i < this.genes.length; i++) {

if (this.genes[i] === target.charAt(i)) {

score++;

}

}

this.fitness = score / target.length;

}

Calculate fitness.

crossover(partner) {

let child = new DNA(this.genes.length);

let midpoint = floor(random(this.genes.length));

for (let i = 0; i < this.genes.length; i++) {

if (i < midpoint) {

child.genes[i] = this.genes[i];

} else {

child.genes[i] = partner.genes[i];

}

}

return child;

}

Crossover

mutate(mutationRate) {

for (let i = 0; i < this.genes.length; i++) {

if (random(1) < mutationRate) {

this.genes[i] = randomCharacter();

}

}

}

Mutation

}

function randomCharacter() {

let c = floor(random(32, 127));

return String.fromCharCode(c);

}

Return a random character (letter, number, symbol, space, and so forth).

In Example 9.1, you might notice that new child elements are directly added to the population array. This approach is possible because I have a separate mating pool array that contains references to the original parent elements. However, if I were to instead use the relay-race weightedSelection() function, I’d need to create a temporary array for the new population. This temporary array would hold the child elements and replace the original population array only after the reproduction step is completed. You’ll see this implemented in Example 9.2.
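The temporary-array pattern might look something like the following sketch. It’s written in plain JavaScript with hypothetical names: nextGeneration() is my own helper, and selectParent and breed are placeholder callbacks standing in for whatever selection and reproduction code a sketch uses.

```javascript
// Hypothetical sketch: build the next generation in a separate array.
// selectParent and breed are placeholder callbacks supplied by the caller.
function nextGeneration(population, selectParent, breed) {
  let newPopulation = [];
  for (let i = 0; i < population.length; i++) {
    // Selection still reads from the untouched current population.
    let parentA = selectParent(population);
    let parentB = selectParent(population);
    newPopulation[i] = breed(parentA, parentB);
  }
  // The caller swaps this in only after every child has been made.
  return newPopulation;
}

// Toy usage with numbers standing in for DNA objects.
let current = [1, 2, 3, 4];
let pickFirst = (pop) => pop[0];
let average = (x, y) => (x + y) / 2;
current = nextGeneration(current, pickFirst, average);
console.log(current); // [1, 1, 1, 1]
```

The point of the separate array is that no child can be picked as a parent within the same generation that created it.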

Exercise 9.6

Add features to Example 9.1 to report more information about the progress of the GA itself. For example, show the phrase closest to the target in each generation, as well as a report on the number of generations, the average fitness, and so on. Stop the GA after it has solved the phrase. Consider writing a Population class to manage the GA, instead of including all the code in draw().

Exercise 9.7

Explore the idea of a dynamic mutation rate. For example, try calculating a mutation rate that inversely correlates with the average fitness of the parent phrases so that higher fitness results in fewer mutations. Does this change affect the behavior of the overall system and how quickly the target phrase is found?

Customizing Genetic Algorithms

The nice thing about using GAs in a project is that example code can easily be ported from application to application. The core mechanics of selection and reproduction don’t need to change. However, you, the creator, will have to customize three key components of GAs for each use. This is crucial to moving beyond trivial demonstrations of evolutionary simulations (as in the Shakespeare example) to creative uses in projects that you make in p5.js and other programming environments.

Key 1: The Global Variables

The GA doesn’t have a lot of variables. If you look at the code in Example 9.1, you’ll see only two global variables that control the algorithm itself (not including the target phrase or the arrays to store the population and mating pool):

let mutationRate = 0.01;

let populationSize = 150;

These two variables can greatly affect the behavior of the system, and it’s not such a good idea to arbitrarily assign them values (though tweaking them through trial and error is a perfectly reasonable way to arrive at optimal values).

I chose the values for the Shakespeare demonstration to virtually guarantee that the GA would solve for the phrase, but not too quickly (approximately 1,000 generations on average), so as to demonstrate the process over a reasonable period of time. A much larger population, however, would yield faster results (if the goal were algorithmic efficiency rather than demonstration). Here’s a table of some results:

| Population | Mutation | Number of Generations Until the Phrase Is Solved | Total Time (in Seconds) Until the Phrase Is Solved |

|---|---|---|---|

| 150 | 1% | 1,089 | 18.8 |

| 300 | 1% | 448 | 8.2 |

| 1,000 | 1% | 71 | 1.8 |

| 50,000 | 1% | 27 | 4.3 |

Notice that increasing the population size drastically reduces the number of generations needed to solve for the phrase. However, it doesn’t necessarily reduce the amount of time. Once the population balloons to 50,000 elements, the sketch begins to run slowly, given the amount of time required to process fitness and build a mating pool out of so many elements. (Of course, optimizations could be made should you require such a large population.)

In addition to the population size, the mutation rate can greatly affect performance.

| Population | Mutation | Number of Generations Until the Phrase Is Solved | Total Time (in Seconds) Until the Phrase Is Solved |

|---|---|---|---|

| 1,000 | 0% | 37 or never? | 1.2 or never? |

| 1,000 | 1% | 71 | 1.8 |

| 1,000 | 2% | 60 | 1.6 |

| 1,000 | 10% | Never? | Never? |

Without any mutation at all (0 percent), you just have to get lucky. If all the correct characters are present somewhere in an element of the initial population, you’ll evolve the phrase very quickly. If not, there’s no way for the sketch to ever reach the exact phrase. Run it a few times and you’ll see both instances. In addition, once the mutation rate gets high enough (10 percent, for example), so much randomness is involved (1 out of every 10 letters is random in each new child) that the simulation is pretty much back to a randomly typing cat. In theory, it will eventually solve the phrase, but you may be waiting much, much longer than is reasonable.
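A rough calculation suggests why the 0 percent runs can go either way. With no mutation, a character can show up at a given position only if some member of the initial population already has it there. The sketch below estimates the chance of that being true for every position at once, assuming 95 equally likely characters (codes 32 through 126) and independently chosen genes. The function name is my own, and this is a necessary condition only: selection can still lose a needed character along the way, so treat the result as an upper bound on the chance of success.

```javascript
// Chance that, for every position in the target, at least one member of a
// random initial population holds the correct character at that position.
function chanceAllCharactersPresent(populationSize, targetLength, alphabetSize) {
  // Probability that no member has the right character at one position.
  let missAtOnePosition = Math.pow(1 - 1 / alphabetSize, populationSize);
  // Positions are independent, so multiply across the whole target.
  return Math.pow(1 - missAtOnePosition, targetLength);
}

console.log(chanceAllCharactersPresent(150, 18, 95));  // roughly 0.016
console.log(chanceAllCharactersPresent(1000, 18, 95)); // very close to 1
```

Under these assumptions, a population of 150 almost never starts with everything it needs, while a population of 1,000 almost always does, which leaves the outcome up to what selection happens to keep.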

Key 2: The Fitness Function

Playing around with the mutation rate or population size is pretty easy and involves little more than typing numbers in your sketch. The real hard work of developing a GA is in writing the fitness function. If you can’t define your problem’s goals and evaluate numerically how well those goals have been achieved, you won’t have successful evolution in your simulation.

Before I move on to other scenarios exploring more sophisticated fitness functions, I want to look at flaws in my Shakespearean fitness function. Consider solving for a phrase that isn’t 18 characters long, but 1,000. And take two elements of the population, one with 800 characters correct and one with 801. Here are their fitness scores:

| Phrase | Characters Correct | Fitness |

|---|---|---|

| A | 800 | 80.0% |

| B | 801 | 80.1% |

This scenario has a couple of problems. First, I’m adding elements to the mating pool N times, where N equals fitness multiplied by 100. But objects can be added to an array only a whole number of times, so A and B will both be added 80 times, giving them an equal probability of being selected. Even with an improved solution that takes floating-point probabilities into account, 80.1 percent is only a teeny tiny bit higher than 80 percent. But getting 801 characters right is a whole lot better than 800 in the evolutionary scenario. I really want to make that additional character count. I want the fitness score for 801 characters to be substantially better than the score for 800.
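The rounding problem is easy to confirm with the numbers from the table. The following sketch applies the same scaling used to build the mating pool; poolEntries() is a name I’ve made up for illustration, and Math.floor() stands in for p5.js’s floor().

```javascript
// Number of mating pool entries for a phrase, using the same scaling as the text.
function poolEntries(correct, total) {
  let fitness = correct / total;
  return Math.floor(fitness * 100);
}

console.log(poolEntries(800, 1000)); // 80
console.log(poolEntries(801, 1000)); // 80
```

Both phrases land in the pool exactly 80 times, so the extra correct character has no effect on selection.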

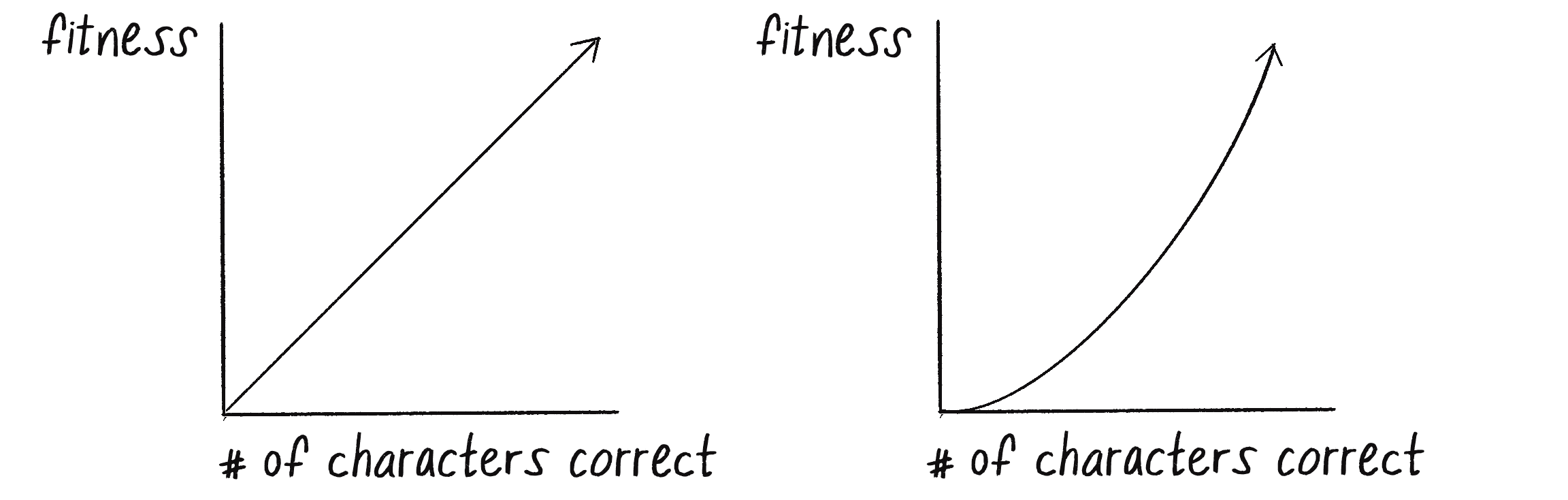

To put it another way, Figure 9.8 shows graphs of two possible fitness functions.

On the left is a linear graph; as the number of characters goes up, so does the fitness score. By contrast, in the graph on the right, as the number of characters goes up, the fitness score goes way up. That is, the fitness increases at an accelerating rate as the number of correct characters increases.

I can achieve this second type of result in various ways. For example, I could say this:

$$\text{fitness} = (\text{correct characters})^2$$

Here, the fitness scores increase quadratically, meaning proportional to the square of the number of correct characters. Say I have two members of the population, one with five correct characters and one with six. The number 6 is a 20 percent increase over the number 5. However, by squaring the correct characters, the fitness value will go from 25 to 36, a 44 percent increase:

| Correct Characters | Fitness |

|---|---|

| 5 | 25 |

| 6 | 36 |

Here’s another formula:

$$\text{fitness} = 2^{\text{correct characters}}$$

And here’s how that formula plays out as the number of correct characters increases:

| Correct Characters | Fitness |

|---|---|

| 1 | 2 |

| 2 | 4 |

| 3 | 8 |

| 4 | 16 |

Here, the fitness scores increase exponentially, doubling with each additional correct character.
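To compare how much each approach rewards one additional correct character, here’s a short sketch that computes the relative gain for the linear, quadratic, and exponential versions. The helper names are my own, and the scores are raw values that haven’t been normalized.

```javascript
// Three ways to turn a count of correct characters into a raw fitness score.
const linear = (n) => n;
const quadratic = (n) => n * n;
const exponential = (n) => Math.pow(2, n);

// Relative gain from getting one more character right.
function gain(fitnessFunction, n) {
  return fitnessFunction(n + 1) / fitnessFunction(n);
}

console.log(gain(linear, 5));        // 1.2, a 20 percent increase
console.log(gain(quadratic, 5));     // 1.44, a 44 percent increase
console.log(gain(exponential, 5));   // 2, a 100 percent increase

console.log(gain(linear, 800));      // 1.00125
console.log(gain(quadratic, 800));   // about 1.0025
console.log(gain(exponential, 800)); // 2
```

For the 1,000-character scenario, squaring still separates 800 from 801 by only about a quarter of a percent, whereas the exponential version keeps doubling no matter how long the phrase is.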

Exercise 9.8

Rewrite the fitness function to increase quadratically or exponentially, according to the number of correct characters. Note that you’ll likely have to normalize the fitness values to a range from 0 to 1 so they can be added to the mating pool a reasonable number of times, or use a different weighted-selection method.

While this rather specific discussion of exponential versus linear equations is an important detail in the design of a good fitness function, I don’t want you to miss the more important point here: design your own fitness function! I seriously doubt that any project you undertake in p5.js with GAs will involve counting the correct number of characters in a string. In the context of this book, you’ll more likely be looking to evolve a creature that’s part of a physics system. Perhaps you’re looking to optimize the weights of steering behaviors so a creature can best escape a predator or avoid an obstacle or make it through a maze. You have to ask yourself what you’re hoping to evaluate.

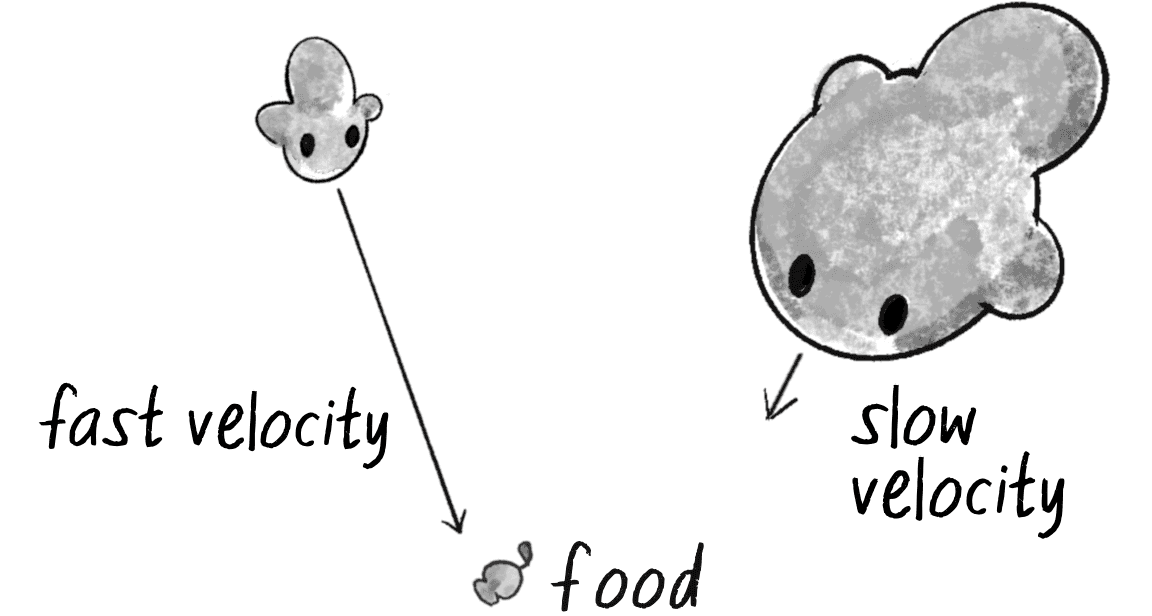

Consider a racing simulation in which a vehicle is evolving a design optimized for speed:

How about a mouse that’s evolving the optimal way to find a piece of cheese?

The design of computer-controlled players in a game is also a common scenario. Say you’re programming a soccer game in which the user is the goalie. The rest of the players are controlled by your program and have a set of parameters that determine how they kick a ball toward the goal. What would be the fitness score for any given player?

$$\text{fitness} = \text{total number of goals scored}$$

This, of course, is a simplistic take on the game of soccer, but it illustrates the point. The more goals a player scores, the higher its fitness, and the more likely its genetic information will appear in the next game. Even with a fitness function as simple as the one described here, this scenario is demonstrating something powerful—the adaptability of a system. If the players continue to evolve from game to game to game, when a new human user enters the game with a completely different strategy, the system will quickly discover that the fitness scores are going down and evolve a new optimal strategy. It will adapt. (Don’t worry, there’s very little danger of this resulting in sentient, soccer-playing robots that will enslave all humans.)

In the end, if you don’t have a fitness function that effectively evaluates the performance of the individual elements of your population, you won’t have any evolution. And the fitness function from one example will likely not apply to a totally different project. You have to design a function, sometimes from scratch, that works for your particular project. And where do you do this? All you have to edit are those few lines of code inside the method that computes the fitness variable:

calculateFitness() {

????????????

????????????

this.fitness = ??????????

}

Filling in those question marks is the part where you get to shine!

Key 3: The Genotype and Phenotype

The final key to designing your own GA relates to the way you choose to encode the properties of your system. What are you trying to express, and how can you translate that expression into a bunch of numbers? What are the genotype and phenotype?

I started with the Shakespeare example because of how easy it is to design both the genotype (an array of characters) and its expression, the phenotype (the string displayed on the canvas). It isn’t always this easy, however. For example, when talking about the fitness function for a soccer game, I happily assumed the existence of computer-controlled kickers that each have a “set of parameters that determine how they kick a ball toward the goal,” but actually determining what those parameters are and how you choose to encode them would require some thought and creativity. And of course, there’s no one correct answer: how you design the system is up to you.

The good news—and I hinted at this earlier in the chapter—is that you’ve been translating genotypes (data) into phenotypes (expression) all along. Anytime you write a class in p5.js, you make a whole bunch of variables:

class Vehicle {

constructor() {

this.maxspeed = ????;

this.maxforce = ????;

this.size = ????;

this.separationWeight = ????;

/* and more... */

}

}

All you need to do to evolve those variables is to turn them into an array, so that the array can be used with all the methods—crossover(), mutate(), and the like—found in the DNA class. One common solution is to use an array of floating-point numbers from 0 to 1:

class DNA {

constructor(length) {

this.genes = [];

An empty array

for (let i = 0; i < length; i++) {

this.genes[i] = random(1);

Always pick a number from 0 to 1.

}

}

}

Notice that I’ve now put the raw genetic data (genotype) and its expression (phenotype) into two separate classes. The DNA class is the genotype—it’s just a bunch of numbers. The Vehicle class is the phenotype—it’s an expression of how to turn those numbers into animated, visual behaviors. The two can be linked by including a DNA instance inside the Vehicle class:

class Vehicle {

constructor() {

this.dna = new DNA(4);

A DNA object embedded into the Vehicle class

this.maxspeed = this.dna.genes[0];

this.maxforce = this.dna.genes[1];

this.size = this.dna.genes[2];

this.separationWeight = this.dna.genes[3];

Use the genes to set variables.

/* and more... */

}

}

Of course, you most likely don’t want all your variables to have a range from 0 to 1. But rather than try to remember how to adjust those ranges in the DNA class, it’s easier to pull the original genetic information from the DNA object and then use p5.js’s map() function to change the range as needed for your phenotype. For example, if you want a size variable between 10 and 72, you would say this:

this.size = map(this.dna.genes[2], 0, 1, 10, 72);
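To show what that remapping does to a few gene values, here’s a plain JavaScript sketch. The function mapRange() is a hypothetical helper that performs the same kind of linear remapping as map(); it’s included only so the arithmetic can be checked outside p5.js.

```javascript
// Hypothetical plain-JavaScript version of a linear range remapping.
function mapRange(value, inMin, inMax, outMin, outMax) {
  return outMin + ((value - inMin) / (inMax - inMin)) * (outMax - outMin);
}

// A few gene values and the sizes they would express.
console.log(mapRange(0, 0, 1, 10, 72));   // 10
console.log(mapRange(0.5, 0, 1, 10, 72)); // 41
console.log(mapRange(1, 0, 1, 10, 72));   // 72
```

The gene itself stays between 0 and 1, so crossover and mutation never need to know what range the phenotype eventually uses.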

In other cases, you may want to design a genotype that’s an array of objects. Consider the design of a rocket with a series of thruster engines. You could consider each thruster to be a vector that describes its direction and relative strength:

class DNA {

constructor(length) {

this.genes = [];

The genotype is an array of vectors.

for (let i = 0; i < length; i++) {

this.genes[i] = p5.Vector.random2D();

A vector pointing in a random direction

this.genes[i].mult(random(10));

And scaled randomly

}

}

}

The phenotype would be a Rocket class that participates in a physics system:

class Rocket {

constructor() {

this.dna = ????;

/* and more... */

}

}

What’s great about dividing the genotype and phenotype into separate classes (DNA and Rocket, for example) is that when it comes time to build all the code, you’ll notice that the DNA class I developed earlier remains intact. The only thing that changes is the kind of data stored in the array (numbers, vectors, and so on) and the expression of that data in the phenotype class.

In the next section, I’ll follow this idea a bit further and walk through the necessary steps to implement an example that involves moving bodies and an array of vectors as DNA.

Evolving Forces: Smart Rockets

I mentioned rockets for a specific reason: in 2009, Jer Thorp released a GA example on his blog titled “Smart Rockets.” Thorp pointed out that the National Aeronautics and Space Administration (NASA) uses evolutionary computing techniques to solve all sorts of problems, from satellite antenna design to rocket-firing patterns. This inspired him to create a Flash demonstration of evolving rockets.

Here’s the scenario: a population of rockets launches from the bottom of the screen with the goal of hitting a target at the top of the screen. Obstacles block a straight-line path to the target (see Figure 9.9).

Each rocket is equipped with five thrusters of variable strength and direction (Figure 9.10). The thrusters don’t fire all at once and continuously; rather, they fire one at a time in a custom sequence.

In this section, I’m going to evolve my own simplified smart rockets, inspired by Thorp’s. When I get to the end of the section, I’ll leave implementing some of Thorp’s additional advanced features as an exercise.

My rockets will have only one thruster, which will be able to fire in any direction with any strength for every frame of animation. This isn’t particularly realistic, but it will make building out the example a little easier. (You can always make the rocket and its thrusters more advanced and realistic later.)

Developing the Rockets

To implement my evolving smart rockets, I’ll start by taking the Mover class from Chapter 2 and renaming it Rocket:

class Rocket {

constructor(x, y) {

this.position = createVector(x, y);

this.velocity = createVector(0, 0);

this.acceleration = createVector(0, 0);

A rocket has three vectors: position, velocity, and acceleration.

}

applyForce(force) {

this.acceleration.add(force);

}

Accumulate forces into acceleration (Newton’s second law).

update() {

A simple physics engine (Euler integration)

this.velocity.add(this.acceleration);

Velocity changes according to acceleration.

this.position.add(this.velocity);

Position changes according to velocity.

this.acceleration.mult(0);

}

}

With this class, I can move the rocket by calling applyForce() with a new force for every frame of animation. The thruster applies a single force to the rocket each time through draw(). But at this point, I’m far from done. To make my rockets “smart” and evolvable, I need to think about the three keys to programming a custom GA, as outlined in the previous section.
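To trace what one force per frame does, here’s a stripped-down sketch of the same update steps using plain numbers in one dimension instead of vectors. It’s an illustration only, with variable names borrowed from the Rocket class, and it assumes the same thruster force of 1 fires on every frame.

```javascript
// One-dimensional stand-in for the Rocket's update step, using plain numbers.
let position = 0;
let velocity = 0;
let acceleration = 0;

function applyForce(force) {
  acceleration += force;
}

function update() {
  velocity += acceleration;
  position += velocity;
  acceleration = 0; // clear the accumulated force for the next frame
}

// Fire the same thruster force for three frames.
for (let frame = 0; frame < 3; frame++) {
  applyForce(1);
  update();
  console.log(frame, velocity, position);
}
// velocity goes 1, 2, 3 and position goes 1, 3, 6
```

Because the acceleration is cleared every frame, the sequence of forces fully determines the path, which is what makes that sequence a good candidate for DNA.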

Key 1 is to define the right global variables for the population size and mutation rate. I’m going to hold off on worrying too much about these variables for now and arbitrarily choose reasonable-sounding numbers—perhaps a population of 50 rockets and a mutation rate of 1 percent. Once I’ve built out the system and have my sketch up and running, I can experiment with these numbers.

Key 2 is to develop an appropriate fitness function. In this case, the goal of a rocket is to reach its target. The closer a rocket gets to the target, the higher its fitness. Fitness is therefore inversely proportional to distance: the smaller the distance, the greater the fitness, and the greater the distance, the smaller the fitness.

To put this into practice, I first need to add a property to the Rocket class to store its fitness:

class Rocket {

constructor(x, y) {

this.fitness = 0;

A rocket has fitness.

this.position = createVector(x, y);

this.velocity = createVector(0, 0);

this.acceleration = createVector(0, 0);

}

Next, I need to add a method to calculate the fitness to the Rocket class. After all, only a Rocket object knows how to compute its distance to the target, so the fitness function should live in this class. Assuming I have a target vector, I can write the following:

calculateFitness() {

let distance = p5.Vector.dist(this.position, target);

How close did the rocket get?

this.fitness = 1 / distance;

Fitness is inversely proportional to distance.

}

This is perhaps the simplest fitness function I could write. By dividing 1 by the distance, large distances become small numbers and small distances become large. If I want to use my quadratic trick from the previous section, I could divide 1 by the distance squared instead:

calculateFitness() {

let distance = p5.Vector.dist(this.position, target);

this.fitness = 1 / (distance * distance);

1 divided by distance squared

}

I’ll want to make several additional improvements to the fitness function, but this is a good start.
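
To get a feel for what squaring the distance does to selection, here is a small sketch that runs outside p5.js. The helper names and the three distances are made up for illustration; only the two formulas come from the code above. It converts each rocket's fitness into a share of the total, which is what selection ultimately works from.

```javascript
// Hypothetical helpers (not part of the sketch): fitness as a function of distance.
function linearFitness(distance) {
  return 1 / distance;
}

function quadraticFitness(distance) {
  return 1 / (distance * distance);
}

// Convert raw fitness scores into shares that sum to 1.
function normalize(scores) {
  const total = scores.reduce((sum, s) => sum + s, 0);
  return scores.map((s) => s / total);
}

// Made-up final distances (in pixels) for three rockets.
const distances = [10, 20, 40];

const linearShares = normalize(distances.map(linearFitness));
const quadraticShares = normalize(distances.map(quadraticFitness));

console.log(linearShares.map((p) => p.toFixed(3)));    // [ '0.571', '0.286', '0.143' ]
console.log(quadraticShares.map((p) => p.toFixed(3))); // [ '0.762', '0.190', '0.048' ]
```

With the linear version, the closest rocket gets about 57 percent of the total fitness; with the quadratic version, it gets about 76 percent. Both versions return Infinity if the distance is exactly 0, which is one reason the function will need further work.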

Finally, Key 3 is to think about the relationship between the genotype and the phenotype. I’ve stated that each rocket has a thruster that fires in a variable direction with a variable magnitude—in other words, a vector! The genotype, the data required to encode the rocket’s behavior, is therefore an array of vectors, one for each frame of the animation:

class DNA {

constructor(length) {

this.genes = [];

for (let i = 0; i < length; i++) {

this.genes[i] = createVector(0, 0);

}

}

}

The happy news here is that I don’t really have to do anything else to the DNA class. All the functionality for the typing cat (crossover and mutation) still applies. The one difference I do have to consider is how to initialize the array of genes. With the typing cat, I had an array of characters and picked a random character for each element of the array. Now I’ll do exactly the same thing and initialize a DNA sequence as an array of random vectors.

Your instinct in creating a random vector might be as follows:

let v = createVector(random(-1, 1), random(-1, 1));

This code is perfectly fine and will likely do the trick. However, if I were to draw every single possible vector that could be picked, the result would fill a square (see Figure 9.11, left). In this case, it probably doesn’t matter, but there’s a slight bias to the diagonals given that a vector from the center of a square to a corner is longer than a purely vertical or horizontal one.

As you may recall from Chapter 3, a better choice is to pick a random angle and create a vector of length 1 from that angle. This produces results that form a circle (see the right of Figure 9.11) and can be achieved with polar-to-Cartesian conversion or the trusty p5.Vector.random2D() method:

for (let i = 0; i < length; i++) {

this.genes[i] = p5.Vector.random2D();

A random unit vector

}
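
The difference between the two approaches can be checked numerically. The following is a rough sketch that uses plain objects and Math.random() as stand-ins for p5.Vector and random(), so the function names here are my own and not part of p5.js. It tracks the longest vector each approach produces over many samples.

```javascript
// Plain-object stand-ins for p5.Vector so the sketch runs outside p5.js.
function randomInSquare() {
  // Independent x and y components, each in [-1, 1).
  return { x: Math.random() * 2 - 1, y: Math.random() * 2 - 1 };
}

function randomUnitVector() {
  // Polar-to-Cartesian conversion: random angle, radius 1.
  const angle = Math.random() * 2 * Math.PI;
  return { x: Math.cos(angle), y: Math.sin(angle) };
}

function magnitude(v) {
  return Math.hypot(v.x, v.y);
}

let longestInSquare = 0;
let longestOnCircle = 0;
for (let i = 0; i < 10000; i++) {
  longestInSquare = Math.max(longestInSquare, magnitude(randomInSquare()));
  longestOnCircle = Math.max(longestOnCircle, magnitude(randomUnitVector()));
}

console.log(longestInSquare); // varies; can approach Math.SQRT2 near the corners
console.log(longestOnCircle); // 1, up to floating-point rounding
```

The square approach can yield vectors up to about 1.41 units long toward the corners, while every vector from the angle-based approach has the same length.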

A vector of length 1 would actually create quite a large force. Remember, forces are applied to acceleration, which accumulates into velocity once per frame, or about 60 times per second at the default p5.js frame rate. Therefore, for this example, I’ll add another variable to the DNA class, a maximum force, and randomly scale all the vectors to be somewhere from 0 to the maximum. This will control the thruster power:

class DNA {

constructor() {

this.genes = [];

The genetic sequence is an array of vectors.

this.maxForce = 0.1;

How strong can the thrusters be?

for (let i = 0; i < lifeSpan; i++) {

this.genes[i] = p5.Vector.random2D();

Notice that the length of genes is equal to a global lifeSpan variable.

this.genes[i].mult(random(0, this.maxForce));

Scale the vectors randomly, but not stronger than the maximum force.

}

}

Notice that I’m using lifeSpan to set the length of genes, the array of vectors. This global variable stores the total number of frames in each generation’s life cycle, allowing me to create a vector for each frame of the rocket’s life.
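
As a rough check on why the thruster needs a cap, here is a one-dimensional sketch of the same update order the Rocket class uses (velocity changes by acceleration, then position changes by velocity). It assumes a mass of 1 and a thruster that always pushes in the same direction; the function name and the choice of 60 frames are mine.

```javascript
// Constant thrust along one axis, using the Rocket class's update order:
// velocity += acceleration, then position += velocity.
function simulateThrust(forcePerFrame, frames) {
  let velocity = 0;
  let position = 0;
  for (let i = 0; i < frames; i++) {
    velocity += forcePerFrame;
    position += velocity;
  }
  return { velocity, position };
}

const fullPower = simulateThrust(1, 60);
const capped = simulateThrust(0.1, 60);

console.log(fullPower); // { velocity: 60, position: 1830 }
console.log(capped);    // approximately { velocity: 6, position: 183 }
```

After only 60 frames at full power, the rocket has traveled 1,830 pixels and is moving 60 pixels per frame. With a force of 0.1, the same 60 frames cover about 183 pixels, which is far easier to keep on a canvas.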

The expression of this array of vectors, the phenotype, is my Rocket class. To cement the connection, I need to add an instance of a DNA object to the class:

class Rocket {

constructor(x, y, dna) {

this.dna = dna;

A rocket has DNA.

this.fitness = 0;

A rocket has fitness.

this.position = createVector(x, y);

this.velocity = createVector(0, 0);

this.acceleration = createVector(0, 0);

}

What am I using this.dna for? As the rocket launches, it marches through the array of vectors and applies them one at a time as a force. To achieve this, I’ll need to include the variable this.geneCounter to help step through the array:

class Rocket {

constructor(x, y, dna) {

this.dna = dna;

A rocket has DNA.

this.fitness = 0;

A rocket has fitness.

this.geneCounter = 0;

A counter for the DNA genes array

this.position = createVector(x, y);

this.velocity = createVector(0, 0);

this.acceleration = createVector(0, 0);

}

run() {

this.applyForce(this.dna.genes[this.geneCounter]);

Apply a force from the genes array.

this.geneCounter++;

Go to the next force in the genes array.

this.update();

Update the rocket’s physics.

}

}

Now I have a DNA class (genotype) and a Rocket class (phenotype). The last piece of the puzzle is a mechanism for managing the population of rockets and implementing selection and reproduction.

Managing the Population

To keep my sketch.js file tidier, I’ll put the code for managing the array of Rocket objects in a Population class. As with the DNA class, the happy news is that I barely have to change anything from the typing cats example. I’m just organizing the code in a more object-oriented way, with a selection() method and a reproduction() method. For the sake of demonstrating a different technique, I’ll also normalize the fitness values in selection() and use the weighted-selection (relay-race) algorithm in reproduction(). This eliminates the need for a separate mating-pool array. The weightedSelection() code is the same as that written earlier in the chapter:

class Population {

constructor(mutation, length) {

Population has variables to keep track of the mutation rate, current

population array, and number of generations.

this.mutationRate = mutation;

Mutation rate

this.population = [];

Array to hold the current population

this.generations = 0;

Number of generations

for (let i = 0; i < length; i++) {

this.population[i] = new Rocket(320, 220, new DNA());

}

}

fitness() {

for (let rocket of this.population) {

rocket.calculateFitness();

}

}

Calculate the fitness for each rocket.

selection() {

The selection method normalizes all the fitness values.

let totalFitness = 0;

for (let rocket of this.population) {

totalFitness += rocket.fitness;

}

Sum all the fitness values.

for (let rocket of this.population) {

rocket.fitness /= totalFitness;

}

Divide by the total to normalize the fitness values.

}

reproduction() {

let newPopulation = [];

A separate array for the next generation

for (let i = 0; i < this.population.length; i++) {

let parentA = this.weightedSelection();

let parentB = this.weightedSelection();

Now use the weighted selection algorithm.

let child = parentA.crossover(parentB);

child.mutate(this.mutationRate);

newPopulation[i] = new Rocket(320, 220, child);

Rocket goes in the new population.

}

this.population = newPopulation;

Now the new population is the current one.

}

I need to make one more fairly significant change, however. With typing cats, a random phrase was evaluated as soon as it was created. The string of characters had no life span; it existed purely for the purpose of calculating its fitness. The rockets, however, need to live for a period of time before they can be evaluated—that is, they need to be given a chance to make their attempt at reaching the target. Therefore, I need to add one more method to the Population class that runs the physics simulation. This is identical to what I did in the run() method of a particle system—update all the particle positions and draw them:

live() {

for (let rocket of this.population) {

rocket.run();

The run() method takes care of the simulation, updates the rocket’s

position, and draws it to the canvas.

}

}

}
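
The weightedSelection() method called in reproduction() isn't repeated in this section. As a reminder of the idea, here is a minimal standalone sketch of relay-race selection, assuming the fitness values have already been normalized to sum to 1 (as selection() does). It isn't the chapter's exact code: the random value is passed in as a parameter only so the output is repeatable, and the function returns the chosen item itself, whereas the method used above presumably returns the chosen rocket's DNA, since reproduction() calls crossover() on the result.

```javascript
// Relay-race selection over items whose fitness values sum to 1.
// `randomValue` would come from random(1) in a p5.js sketch.
function weightedSelection(items, randomValue) {
  let index = 0;
  let start = randomValue;
  // Subtract each fitness in turn until the running value is used up.
  // The bounds check guards against floating-point drift past the last item.
  while (start > 0 && index < items.length) {
    start -= items[index].fitness;
    index++;
  }
  // The loop steps one past the chosen item (or never runs when start is 0).
  index = Math.max(index - 1, 0);
  return items[index];
}

// Made-up population with normalized fitness values.
const rockets = [
  { name: "A", fitness: 0.1 },
  { name: "B", fitness: 0.6 },
  { name: "C", fitness: 0.3 },
];

console.log(weightedSelection(rockets, 0.05).name); // A
console.log(weightedSelection(rockets, 0.5).name);  // B
console.log(weightedSelection(rockets, 0.95).name); // C
```

Each item is picked whenever the random value lands in its slice of the 0-to-1 range, so rocket B, with 60 percent of the total fitness, is chosen about 60 percent of the time.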

Finally, I’m ready for setup() and draw(). Here, my primary responsibility is to implement the steps of the GA in the appropriate order by calling the methods from the Population class:

population.fitness();

population.selection();

population.reproduction();

However, unlike the Shakespeare example, I don’t want to do this every frame. Rather, my steps work as follows:

1. Create a population of rockets.

2. Let the rockets live for N frames.

3. Evolve the next generation:

   - Selection

   - Reproduction

4. Return to step 2.

To know when to go from step 2 to 3, I need a lifeCounter variable that tracks the current generation’s progress, along with the lifeSpan variable. In draw(), while lifeCounter is less than lifeSpan, the population’s live() method is called to run the simulation. Once lifeCounter hits lifeSpan, it’s time for fitness(), selection(), and reproduction() to evolve a new generation of rockets.
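
The counter logic can be tried on its own before any rockets are involved. This sketch is a hypothetical stand-in for draw() that only reports which branch would run on each frame. The makeScheduler() name and the life span of 3 are made up to keep the output short.

```javascript
// Hypothetical stand-in for draw(): reports which branch runs on each frame.
function makeScheduler(lifeSpan) {
  let lifeCounter = 0;
  return function step() {
    if (lifeCounter < lifeSpan) {
      lifeCounter++;
      return "live";
    }
    // Life span reached: reset the counter and evolve.
    lifeCounter = 0;
    return "evolve";
  };
}

const frame = makeScheduler(3);
const log = [];
for (let i = 0; i < 8; i++) {
  log.push(frame());
}

console.log(log.join(" "));
// live live live evolve live live live evolve
```

One detail worth noticing is that the frame that evolves the population doesn't also run the simulation, so each generation occupies lifeSpan + 1 frames of draw().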

Example 9.2: Smart Rockets

let lifeSpan = 500;

How many frames does a generation live for?

let lifeCounter = 0;

Keep track of the life span.

let population;

The population

function setup() {

createCanvas(640, 240);

population = new Population(0.01, 50);

Step 1: Create the population. Try different values for the mutation rate and population size.

}

function draw() {

background(255);

if (lifeCounter < lifeSpan) {

The revised GA

population.live();

lifeCounter++;

Step 2: The rockets live their lives until lifeCounter reaches lifeSpan.

} else {

lifeCounter = 0;

population.fitness();

population.selection();

population.reproduction();

When lifeSpan is reached, reset lifeCounter and evolve the next

generation (step 3: selection and reproduction).

}

}

function mousePressed() {

target.x = mouseX;

target.y = mouseY;

}

Move the target if the mouse is clicked. The rockets will adapt to the new target.

At the bottom of the code, you’ll see that I’ve added a new feature: when the mouse is clicked, the target position is moved to the coordinates of the mouse cursor. This change allows you to observe how the rockets adapt and adjust their trajectories toward the new target position as the system continuously evolves in real time.

Making Improvements

My smart rockets work but aren’t particularly exciting yet. After all, the rockets simply evolve toward having DNA with a bunch of vectors that point straight at the target. To make the example more interesting, I’m going to suggest two improvements. For starters, when I first introduced the smart rocket scenario, I said the rockets should evolve the ability to avoid obstacles. Adding this feature will make the system more complex and demonstrate the power of the evolutionary algorithm more effectively.

To evolve obstacle avoidance, I need some obstacles to avoid. I can easily create rectangular, stationary obstacles by implementing a class of Obstacle objects that store their own position and dimensions:

class Obstacle {

constructor(x, y, w, h) {

this.position = createVector(x, y);

this.w = w;

this.h = h;

}

I’ll add a contains() method to the Obstacle class that returns true if a rocket has hit the obstacle, or false otherwise:

contains(spot) {

return (

spot.x > this.position.x &&

spot.x < this.position.x + this.w &&

spot.y > this.position.y &&

spot.y < this.position.y + this.h

);

}
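
To see how contains() behaves at the boundaries, here is a small standalone version that swaps createVector() for a plain object so it runs outside p5.js. The Box class name and the sample coordinates are made up; the comparison logic is the same as in the method above, which treats position as the rectangle's top-left corner.

```javascript
// Plain-object version of the obstacle idea; position is the top-left corner.
class Box {
  constructor(x, y, w, h) {
    this.position = { x, y };
    this.w = w;
    this.h = h;
  }

  // True only when the point is strictly inside the rectangle.
  contains(spot) {
    return (
      spot.x > this.position.x &&
      spot.x < this.position.x + this.w &&
      spot.y > this.position.y &&
      spot.y < this.position.y + this.h
    );
  }
}

const box = new Box(100, 50, 200, 20);

console.log(box.contains({ x: 150, y: 60 })); // true: inside
console.log(box.contains({ x: 100, y: 60 })); // false: exactly on the left edge
console.log(box.contains({ x: 150, y: 80 })); // false: below the bottom edge
```

Because the comparisons are strict, a point sitting exactly on an edge doesn't count as a hit. For rockets moving a fraction of a pixel at a time, that distinction rarely matters.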

If I create an array of Obstacle objects, I can then have each rocket check to see whether it has collided with each obstacle. If a collision occurs, the rocket can set the Boolean flag hitObstacle to true. To achieve this, I need to add a method to the Rocket class:

checkObstacles(obstacles) {

for (let obstacle of obstacles) {

if (obstacle.contains(this.position)) {

this.hitObstacle = true;

}

}

}

This new method lives in the Rocket class and checks whether a rocket has

hit an obstacle.

If the rocket hits an obstacle, I’ll stop the rocket from updating its position. The revised run() method now receives an obstacles array as an argument:

run(obstacles) {

if (!this.hitObstacle) {

this.applyForce(this.dna.genes[this.geneCounter]);

this.geneCounter = (this.geneCounter + 1);